Multi-Agent AI Use Cases: A Deployment Maturity Audit

Enterprise interest in multi-agent AI has grown faster than the evidence supporting most of its use cases. Individual AI agents are already well understood: what they are, how they reason, and what they can do autonomously. The harder question is what happens when multiple agents coordinate at scale.

That is where deployment reality diverges from vendor claims. This article audits multi-agent AI use cases by maturity: what is running in production, what is in credible pilots, and what is not yet ready for enterprise commitment.

From Single Agent to Multi-Agent: What Actually Changes

A multi-agent AI system is a network of individual AI agents that divide a complex task into parts, each handled by a specialized agent, coordinated toward a shared outcome.

What changes at the system level is scope and structure. A single agent handles a task end-to-end within its own process. A multi-agent system breaks that task into components, assigns each to an agent built for that specific function, and introduces an orchestration layer to manage sequencing, communication, and conflict resolution between agents.

Three architectural shifts distinguish multi-agent systems from single-agent deployments:

Task decomposition. Work that exceeds a single agent's context window, tool access, or reasoning capacity gets split into bounded subtasks. Each subtask is routed to the agent best equipped to handle it.

Inter-agent communication. Agents share outputs, pass state, and in some architectures, negotiate over decisions. This happens through message passing, shared memory stores, or event-driven triggers — depending on the framework.

Fault tolerance through redundancy. When one agent in a multi-agent system fails or produces a low-confidence output, another can verify, retry, or escalate — without stopping the entire workflow. A single agent has no such fallback built into its architecture.
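The three shifts can be sketched together in a few lines. This is a minimal illustration, not any specific framework's API. It assumes a hypothetical agent signature (payload plus a shared message store in, output plus a confidence score out) and an arbitrary 0.7 confidence threshold. Decomposition appears as a list of role-tagged subtasks, communication as a shared store, and redundancy as a per-role fallback agent with human escalation as the last resort.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical agent signature: (subtask payload, shared message store) -> (output, confidence).
AgentFn = Callable[[str, Dict[str, str]], Tuple[str, float]]


@dataclass
class Orchestrator:
    agents: Dict[str, AgentFn]                                   # one specialized agent per role
    fallbacks: Dict[str, AgentFn] = field(default_factory=dict)  # optional backup agent per role
    min_confidence: float = 0.7                                  # assumed threshold; tune per deployment
    messages: Dict[str, str] = field(default_factory=dict)       # shared store for passing state

    def _call(self, agent: AgentFn, payload: str) -> Tuple[str, float]:
        try:
            return agent(payload, self.messages)
        except Exception:
            return "", 0.0  # treat a crashed agent as a zero-confidence result

    def run(self, subtasks: List[Tuple[str, str]]) -> Dict[str, str]:
        """Task decomposition: each (role, payload) subtask goes to the agent for that role."""
        for role, payload in subtasks:
            output, conf = self._call(self.agents[role], payload)
            if conf < self.min_confidence and role in self.fallbacks:
                # Fault tolerance: a second agent retries instead of halting the workflow.
                output, conf = self._call(self.fallbacks[role], payload)
            if conf < self.min_confidence:
                output = f"ESCALATED: {role}"  # hand to a human rather than pass on a guess
            self.messages[role] = output  # inter-agent communication via the shared store
        return self.messages


# Usage with stub agents standing in for model-backed ones.
def flaky_extract(payload, msgs):
    raise RuntimeError("tool timeout")


def backup_extract(payload, msgs):
    return f"fields from {payload}", 0.8


def summarize(payload, msgs):
    # Reads the upstream agent's output from the shared store.
    return f"summary of {msgs['extract']}", 0.9


orch = Orchestrator(agents={"extract": flaky_extract, "summarize": summarize},
                    fallbacks={"extract": backup_extract})
result = orch.run([("extract", "invoice.pdf"), ("summarize", "invoice.pdf")])
# result == {"extract": "fields from invoice.pdf",
#            "summarize": "summary of fields from invoice.pdf"}
```

The primary extractor fails, the fallback takes over, and the summarizer still completes because it reads the fallback's output from the shared store. A single agent in the same position would simply stop.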

The table below shows where the two approaches differ in practice:

| Dimension | Single Agent | Multi-Agent System |
|---|---|---|
| Task scope | Defined, bounded | Complex, decomposable |
| Specialization | Generalist across steps | Specialized per function |
| Scalability | Limited by one context window | Scales by adding agents |
| Fault tolerance | Single point of failure | Redundancy across agents |
| Coordination overhead | None | Significant; requires orchestration |
| Cost profile | Predictable | Variable; compounds with agent count |

The coordination overhead and cost profile rows matter more than most introductory content acknowledges. Multi-agent AI systems are not a straightforward upgrade from single-agent deployments — they introduce complexity in orchestration, observability, debugging, and inference cost that only justifies itself when the task genuinely requires it.

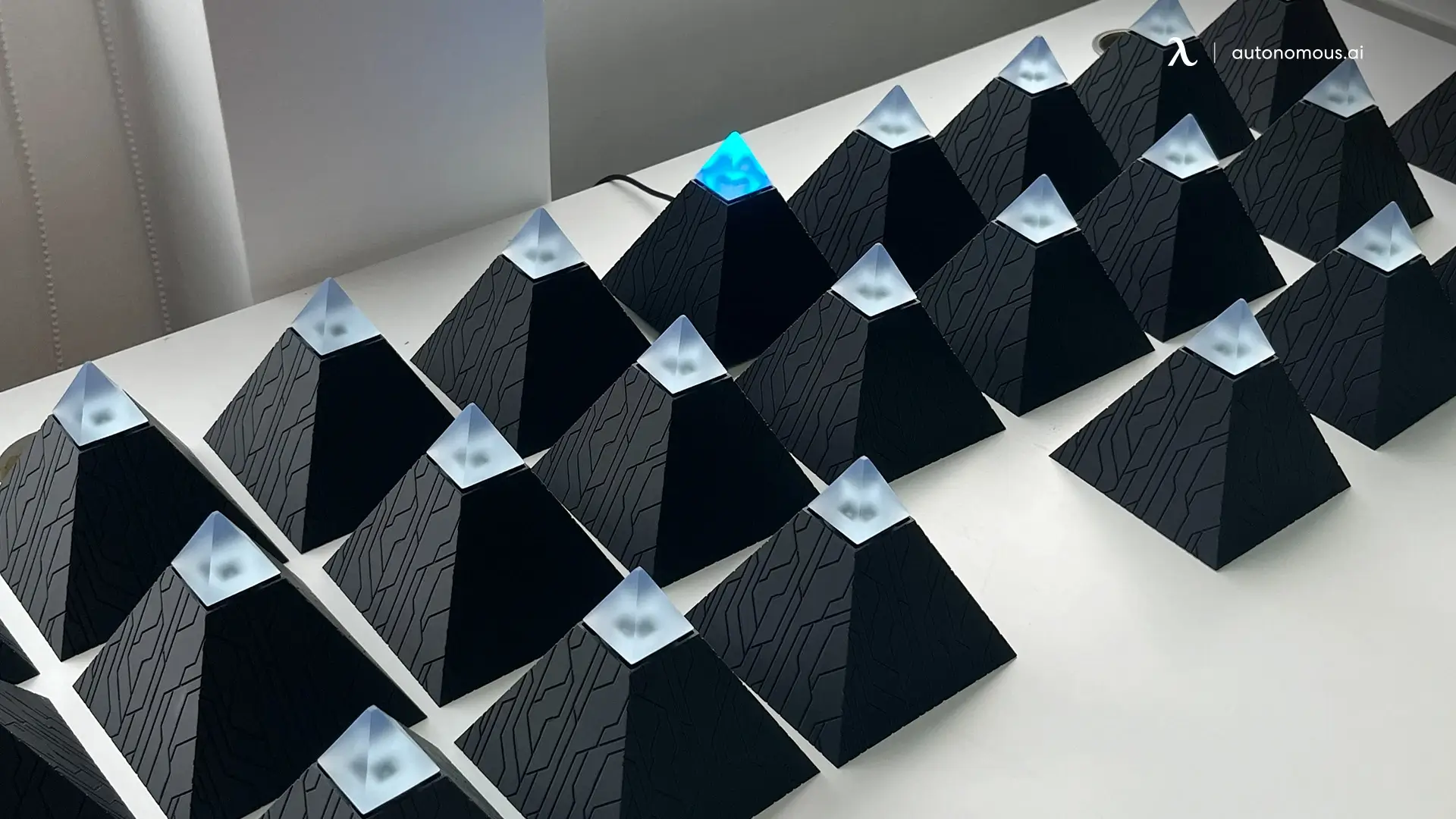

For teams evaluating this progression in a workplace context, the gap between a single-agent deployment like Autonomous Intern and a coordinated multi-agent system is a useful reference point — the architectural difference is concrete enough to make the tradeoff legible before committing to the more complex path.

Production-Ready Multi-Agent AI Use Cases

A production-ready multi-agent AI use case is one with documented deployments at scale, quantified outcomes, and a repeatable architecture that works across more than one organization.

Four use cases meet that standard today.

1. Customer Support Orchestration

Multi-agent coordination in customer support works by distributing the stages of a support interaction across specialized agents: one handling initial triage and intent classification, another pulling relevant documentation, a third generating a response, and a fourth managing escalation logic when the query exceeds automated resolution scope.

This stage-by-stage split is the architecture that repeats across documented deployments, with each agent confined to a tightly defined scope. It is structurally different from single-agent customer support automation, which is closer in scope to what an AI assistant handles than to what a coordinated multi-agent system is designed for.

The production outcomes that hold consistently: faster resolution, higher first-contact resolution rates, and lower operating cost per interaction. These depend on two conditions — escalation logic is explicit rather than emergent, and a human review path exists at every tier where agent confidence falls below a defined threshold.
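The two conditions above (explicit escalation logic and a human path wherever confidence drops) can be made concrete with a small sketch. Everything here is hypothetical: the keyword-based triage, the placeholder knowledge-base entries, the fixed confidence scores, and the single 0.75 threshold stand in for real models and tuned per-stage values. The point is that escalation is a written rule evaluated after every stage, not something the agents decide among themselves.

```python
from dataclasses import dataclass

# Assumed single threshold for every tier; a real deployment might tune one per stage.
CONFIDENCE_THRESHOLD = 0.75


@dataclass
class StageResult:
    output: str
    confidence: float


def triage_agent(query: str) -> StageResult:
    # Hypothetical keyword rules standing in for an intent-classification model.
    q = query.lower()
    if "refund" in q:
        return StageResult("billing", 0.92)
    if "password" in q:
        return StageResult("account_access", 0.88)
    return StageResult("unknown", 0.40)


def retrieval_agent(intent: str) -> StageResult:
    # Hypothetical knowledge base, scoped to the triaged intent only.
    kb = {"billing": "[billing help article]", "account_access": "[password reset article]"}
    doc = kb.get(intent, "")
    return StageResult(doc, 0.90 if doc else 0.0)


def response_agent(query: str, doc: str) -> StageResult:
    # Stand-in for a generation model grounded on the retrieved document.
    return StageResult(f"Thanks for reaching out. See {doc}.", 0.85)


def handle_ticket(query: str) -> dict:
    """Escalation logic: every stage has an explicit threshold and a human path."""
    t = triage_agent(query)
    if t.confidence < CONFIDENCE_THRESHOLD:
        return {"route": "human", "failed_stage": "triage"}
    r = retrieval_agent(t.output)
    if r.confidence < CONFIDENCE_THRESHOLD:
        return {"route": "human", "failed_stage": "retrieval"}
    a = response_agent(query, r.output)
    if a.confidence < CONFIDENCE_THRESHOLD:
        return {"route": "human", "failed_stage": "response"}
    return {"route": "auto", "intent": t.output, "reply": a.output}


auto_case = handle_ticket("I need a refund for order 123")  # resolved automatically
human_case = handle_ticket("Hello?")                        # routed to a human at triage
```

Recording which stage triggered the handoff (`failed_stage`) is a small design choice that pays off later: it shows where the automated path is weakest.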

2. Supply Chain Optimization

Supply chain coordination suits multi-agent architecture for a structural reason: the domain is already organized around discrete, interdependent functions with defined inputs and outputs at each stage. Demand forecasting, vendor management, logistics routing, and compliance checking each have clear boundaries — agent roles can be scoped precisely before deployment rather than approximated during it.

In practice, multiple AI agents working together across a supply chain can process inventory levels, supplier lead times, logistics constraints, and demand signals simultaneously, and surface tradeoffs between competing priorities in real time. Published operations management research identifies this natural decomposition as the primary reason supply chain use cases reach production faster than comparably complex enterprise applications.

For organizations evaluating how AI automation for business fits into supply chain workflows, the distinction between rule-based automation and multi-agent coordination is the first architectural decision — the two approaches handle variability in fundamentally different ways.

The production constraint worth noting: multi-agent coordination here requires clean, standardized data pipelines before it adds value. Organizations without that foundation find the coordination layer compounds existing data quality problems rather than resolving them.
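A minimal sketch of that ordering: a data-quality gate runs first, and only a clean batch reaches the specialized agents, which then read each record in parallel. The field names, the 10-day "slow lane" rule, and the three one-line agents are hypothetical simplifications; real forecasting and routing agents would be far richer. The coordinator surfaces a tradeoff instead of resolving it silently when a shortfall meets a slow lane.

```python
from concurrent.futures import ThreadPoolExecutor

REQUIRED_FIELDS = ("sku", "on_hand", "lead_time_days", "daily_demand")


def failed_records(records):
    """Data-quality gate: missing fields or negative quantities fail the batch."""
    return [r for r in records
            if any(f not in r for f in REQUIRED_FIELDS)
            or any(r[f] < 0 for f in REQUIRED_FIELDS[1:])]


def demand_agent(r):
    # Projected demand over the replenishment window.
    return r["daily_demand"] * r["lead_time_days"]


def inventory_agent(r):
    return r["on_hand"]


def logistics_agent(r):
    # Hypothetical rule: lead times over 10 days count as a slow lane.
    return r["lead_time_days"] > 10


def plan(records):
    bad = failed_records(records)
    if bad:
        # Refuse to coordinate on dirty data rather than compound its errors.
        raise ValueError(f"{len(bad)} record(s) failed validation")
    plans = []
    with ThreadPoolExecutor() as pool:
        for r in records:
            # The three agents read the same record in parallel.
            futures = [pool.submit(agent, r)
                       for agent in (demand_agent, inventory_agent, logistics_agent)]
            projected, on_hand, slow_lane = (f.result() for f in futures)
            shortfall = projected - on_hand
            if shortfall <= 0:
                action = "hold"
            elif slow_lane:
                # Surface the competing priorities instead of silently picking one.
                action = "tradeoff: expedite at higher cost, or accept stockout risk"
            else:
                action = "reorder"
            plans.append({"sku": r["sku"], "shortfall": max(shortfall, 0), "action": action})
    return plans


records = [
    {"sku": "A", "on_hand": 100, "lead_time_days": 5, "daily_demand": 10},
    {"sku": "B", "on_hand": 20, "lead_time_days": 14, "daily_demand": 5},
    {"sku": "C", "on_hand": 10, "lead_time_days": 4, "daily_demand": 5},
]
plans = plan(records)  # A: hold, B: tradeoff, C: reorder
```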

3. Software Development Pipelines

Code production at scale involves functions that are distinct enough to warrant specialization but interdependent enough to require coordination — which is precisely the condition multi-agent AI systems are designed to handle.

The architecture assigns discrete roles to individual agents: requirements analysis, code generation, test writing, code review, and documentation, operating in sequence with handoff validation between stages. MetaGPT's role-simulation framework is the most documented open example of this pattern, modeling a complete software team where agents follow standardized operating procedures across the full development cycle.

What the deployment evidence shows consistently: the review and validation agents — not the generation agents — are where the quality gain occurs. Generation agents produce output at speed. Validation agents catch what generation agents miss. Removing the validation layer to reduce cost or latency reliably degrades output quality to below single-agent baselines.
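The generation/validation split can be sketched as a loop with a validated handoff. This is an illustration under stated assumptions, not MetaGPT's API: `stub_generation_agent` is a hypothetical stand-in for a model that writes a buggy first draft and fixes it once it receives feedback, and the validation agent simply executes the candidate against fixed test cases. `exec` is used only to keep the sketch self-contained; real pipelines would run candidate code in a sandbox.

```python
from typing import Callable, List, Tuple

Case = Tuple[tuple, object]  # (arguments, expected result)


def validation_agent(code: str, fn_name: str, tests: List[Case]) -> List[str]:
    """Execute the candidate and return readable failures (empty list means pass)."""
    namespace: dict = {}
    try:
        exec(code, namespace)  # sketch only: real pipelines run this in a sandbox
        fn = namespace[fn_name]
    except Exception as exc:
        return [f"load error: {exc!r}"]
    failures = []
    for args, expected in tests:
        try:
            got = fn(*args)
        except Exception as exc:
            failures.append(f"{args}: raised {exc!r}")
            continue
        if got != expected:
            failures.append(f"{args}: expected {expected!r}, got {got!r}")
    return failures


def run_pipeline(generate: Callable[[str, List[str]], str], spec: str,
                 fn_name: str, tests: List[Case], max_attempts: int = 3) -> dict:
    """Generation -> validation handoff; failures become the next attempt's input."""
    feedback: List[str] = []
    for attempt in range(1, max_attempts + 1):
        code = generate(spec, feedback)
        feedback = validation_agent(code, fn_name, tests)
        if not feedback:
            return {"status": "accepted", "attempts": attempt, "code": code}
    return {"status": "rejected", "attempts": max_attempts, "failures": feedback}


def stub_generation_agent(spec: str, feedback: List[str]) -> str:
    # Hypothetical stand-in for a model: buggy first draft, corrected once feedback arrives.
    if not feedback:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


result = run_pipeline(stub_generation_agent, "add two numbers", "add",
                      [((1, 2), 3), ((0, 0), 0)])
# result["status"] == "accepted" on attempt 2
```

Note that the second test case, `(0, 0)`, passes even with the buggy draft; only the validation layer's full case list catches the defect, which is the quality gain the paragraph above attributes to review and validation agents.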

4. Enterprise Data Analysis

Data analysis pipelines involve stages that are sequential by necessity but do not require the same agent to handle all of them — making this a natural candidate for multi-agent AI deployment.

Ingestion, cleaning, transformation, analysis, and report generation each become the responsibility of a specialized agent, with each stage producing a validated output before the next begins. The advantage over single-agent approaches is parallelism across data sources and specialization within each stage.

The governance requirement is non-negotiable in regulated industries: audit trails for every agent action, human review at the interpretation stage, and clear data handling protocols at every point where agents access sensitive information — the same principles that underpin AI privacy and security in any agentic deployment. Deployments that have bypassed that checkpoint have produced confident, well-formatted, incorrect conclusions — a failure mode that is harder to catch precisely because the output structure appears sound.
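A compact sketch of the pattern: sources are ingested in parallel, each stage validates before handing off, every agent action lands in an append-only audit log, and the report stage refuses to publish without a named human reviewer. The in-memory source data, the `analyst-1` reviewer ID, and the simple mean-by-source "analysis" are hypothetical placeholders; a production audit trail would go to durable, tamper-evident storage.

```python
import json
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List, Optional

AUDIT_LOG: List[dict] = []  # append-only record of every agent action


def audit(agent: str, action: str, detail: dict) -> None:
    AUDIT_LOG.append({"ts": time.time(), "agent": agent, "action": action, "detail": detail})


# Hypothetical source data; None marks a missing value.
SOURCES = {"east": [10.0, None, 12.0], "west": [8.0, 9.0, None]}


def ingestion_agent(source: str) -> List[Optional[float]]:
    rows = SOURCES[source]
    audit("ingestion", "read", {"source": source, "rows": len(rows)})
    return rows


def cleaning_agent(source: str, rows: List[Optional[float]]) -> List[float]:
    cleaned = [v for v in rows if v is not None]
    audit("cleaning", "drop_missing", {"source": source, "dropped": len(rows) - len(cleaned)})
    if not cleaned:
        raise ValueError(f"{source}: nothing left after cleaning; halt before analysis")
    return cleaned


def analysis_agent(data: Dict[str, List[float]]) -> Dict[str, float]:
    means = {src: sum(vals) / len(vals) for src, vals in data.items()}
    audit("analysis", "mean_by_source", means)
    return means


def report_agent(findings: Dict[str, float], reviewed_by: Optional[str]) -> Optional[str]:
    # Human checkpoint at the interpretation stage: no reviewer, no report.
    if reviewed_by is None:
        audit("report", "blocked", {"reason": "awaiting human review"})
        return None
    audit("report", "published", {"reviewed_by": reviewed_by})
    return json.dumps(findings, sort_keys=True)


with ThreadPoolExecutor() as pool:  # ingest all sources in parallel
    raw = dict(zip(SOURCES, pool.map(ingestion_agent, SOURCES)))
cleaned = {src: cleaning_agent(src, rows) for src, rows in raw.items()}
findings = analysis_agent(cleaned)
draft = report_agent(findings, reviewed_by=None)         # None: blocked
final = report_agent(findings, reviewed_by="analyst-1")  # '{"east": 11.0, "west": 8.5}'
```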

What Makes These Use Cases Work

Three structural characteristics appear consistently across production-ready multi-agent deployments.

Bounded agent roles. Every agent has a narrow, explicitly defined function. Agents with broad or overlapping mandates produce coordination failures — conflicting outputs, redundant actions, and cascading errors that are difficult to trace.

Explicit handoff conditions. The conditions under which one agent passes work to the next are defined in advance, not inferred. When handoff logic is left to emergent behavior, the system is reliable in testing and unpredictable in production.

Human checkpoints at high-stakes decision nodes. No production-ready multi-agent system operates without defined points where a human reviews or redirects before a consequential action is taken. Full autonomy at scale, in any of these four use cases, is not what is actually running in production — regardless of how it is described in vendor documentation.
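One way to enforce all three characteristics is to write the agent roles down as data and check them before anything is built. The sketch below is hypothetical (the `Role` fields, the `"human"`/`"done"` terminal names, and the example roles are illustrative, not from any framework): it flags overlapping or undefined roles, handoffs to agents that do not exist, and a missing human checkpoint, and at runtime it routes any undeclared condition to a human instead of letting the system improvise.

```python
from dataclasses import dataclass
from typing import Dict, List

TERMINALS = {"human", "done"}


@dataclass
class Role:
    name: str
    responsibility: str             # one narrow function, written down before building
    handoffs: Dict[str, str]        # explicit condition -> next role (or "human" / "done")
    human_checkpoint: bool = False  # output is reviewed before any consequential action


def check_spec(roles: List[Role]) -> List[str]:
    """Pre-build checks; returns a list of problems (empty means the spec passes)."""
    problems = []
    names = [r.name for r in roles]
    if len(names) != len(set(names)):
        problems.append("duplicate role names (overlapping mandates)")
    known = set(names) | TERMINALS
    for r in roles:
        if not r.responsibility.strip():
            problems.append(f"{r.name}: responsibility not defined")
        if not r.handoffs:
            problems.append(f"{r.name}: no explicit handoff conditions")
        for condition, target in r.handoffs.items():
            if target not in known:
                problems.append(f"{r.name}: '{condition}' hands off to unknown role '{target}'")
    if not any(r.human_checkpoint for r in roles):
        problems.append("no human checkpoint defined")
    return problems


def next_step(roles: List[Role], current: str, condition: str) -> str:
    """Route only on declared conditions; anything undeclared goes to a human."""
    role = {r.name: r for r in roles}[current]
    return role.handoffs.get(condition, "human")


spec = [
    Role("triage", "classify intent", {"known_intent": "resolver", "unclear": "human"}),
    Role("resolver", "draft a reply", {"drafted": "approver"}),
    Role("approver", "release reply after review", {"approved": "done"}, human_checkpoint=True),
]
problems = check_spec(spec)                         # []
route = next_step(spec, "triage", "angry_customer")  # "human": condition was never declared
```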

Emerging Multi-Agent AI Use Cases

An emerging multi-agent AI use case has credible pilot evidence and sound underlying architecture, but has not yet achieved standardized deployment across organizations.

Three use cases are at this stage.

1. Healthcare Clinical Coordination

Clinical environments involve exactly the kind of parallel, interdependent workload that multi-agent AI systems are designed for: scheduling (including the coordination work that an AI scheduling assistant handles at the individual level), prior authorization processing, clinical note analysis, and resource allocation across departments.

The pilot evidence supports the architecture. The deployment reality is more complicated. A field study co-authored by researchers at MIT Sloan, Harvard Medical School, and Mass General Brigham, based on the deployment of a clinical AI agent for immune-related adverse event detection, found that less than 20% of total project effort went to model development and prompt engineering. The remaining 80-plus percent was consumed by data integration, model validation, governance, and workflow integration. The agent performed as designed. The infrastructure required to support it reliably was where the work actually lived.

Data standardization and institutional governance requirements are the binding constraints — not the technical capability of the agents themselves.

2. Financial Services Personalization

The personalization problem in financial services — delivering guidance that reflects an individual's account data, behavioral patterns, market conditions, and regulatory constraints simultaneously — maps well onto multi-agent AI coordination, where each input stream can be monitored by a specialized agent and outputs reconciled before a recommendation surfaces.

Early pilot results show genuine value in this layer. The constraint blocking production scale is not agent capability but auditability. Regulators across multiple jurisdictions expect automated financial decisions affecting consumers to be explainable, auditable, and contestable, and current multi-agent frameworks do not yet meet that standard consistently: the distributed reasoning across specialized agents that makes the architecture capable here also makes a coherent audit trail structurally harder to produce. Production readiness depends on regulatory frameworks defining what an acceptable audit trail looks like for distributed decisions, not on further development of the agents themselves.

3. AI Workspace Productivity

For knowledge workers, the appeal of multi-agent AI is straightforward: instead of manually switching between email, calendar, documents, and task tools throughout the day, multiple agents handle that coordination in the background — each responsible for a specific function, passing work between them without human intervention.

The challenge is not the agents. It is the infrastructure connecting them. Agents that perform reliably within a single platform degrade when crossing into another system with different APIs or permission structures. The coordination overhead multi-agent AI is supposed to eliminate often reappears at those integration boundaries instead — the problem shifts rather than disappears.

The AI secretary function illustrates where the baseline currently sits: individual-level coordination across a defined set of platforms, handled by a single well-configured agent rather than a distributed system. Autonomous Intern operates at that boundary — handling communication, scheduling, and task coordination across Telegram, Slack, and WhatsApp as a single-agent deployment. For teams evaluating where multiple AI agents working together would add proportionate value beyond that baseline, the integration layer is the first constraint to assess, not the last.

Production readiness here depends on resolving that integration layer at the infrastructure level — not on further development of the agents themselves.
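A minimal sketch of what "resolving the integration layer" can mean in code: each platform gets an adapter that normalizes its payload into one message type and declares its granted permissions, so a permission gap fails loudly at the boundary instead of degrading the agent silently. The adapter classes, the simplified payload shapes, and the `tasks:create` scope are all hypothetical; they do not reproduce the real Slack or Telegram API schemas or permission models.

```python
from dataclasses import dataclass
from typing import Protocol, Set


@dataclass
class Message:
    platform: str
    sender: str
    text: str


class PlatformAdapter(Protocol):
    name: str
    granted_scopes: Set[str]

    def normalize(self, raw: dict) -> Message: ...


class SlackStyleAdapter:
    # Simplified, hypothetical payload shape; not the full Slack event schema.
    name = "slack"

    def __init__(self, scopes):
        self.granted_scopes = set(scopes)

    def normalize(self, raw: dict) -> Message:
        return Message(self.name, raw["user"], raw["text"])


class TelegramStyleAdapter:
    # Simplified, hypothetical payload shape; not the full Telegram update schema.
    name = "telegram"

    def __init__(self, scopes):
        self.granted_scopes = set(scopes)

    def normalize(self, raw: dict) -> Message:
        return Message(self.name, raw["from"]["username"], raw["text"])


def create_task(adapter: PlatformAdapter, raw: dict, required_scope: str = "tasks:create") -> str:
    """The agent sees one Message type; permission gaps surface at the boundary."""
    if required_scope not in adapter.granted_scopes:
        raise PermissionError(f"{adapter.name}: missing scope '{required_scope}'")
    msg = adapter.normalize(raw)
    return f"[{msg.platform}] task from {msg.sender}: {msg.text}"


ok = create_task(SlackStyleAdapter({"tasks:create"}), {"user": "U123", "text": "send the Q3 deck"})
try:
    # Same request, but this platform never granted the permission.
    create_task(TelegramStyleAdapter(set()), {"from": {"username": "dana"}, "text": "book a room"})
    telegram_blocked = False
except PermissionError:
    telegram_blocked = True
```

Most of the effort in a real deployment sits in the adapters, not in the agent logic, which is consistent with the point that the coordination problem shifts to the integration boundaries.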

Overpromised Multi-Agent AI Use Cases

An overpromised multi-agent AI use case is one where the technology has been demonstrated in controlled conditions but cannot yet be deployed reliably in production — due to governance gaps, regulatory constraints, or reliability failures that compound at scale.

Three use cases fall into this tier.

1. Fully Autonomous Legal and Compliance Review

The architectural case for multi-agent AI in legal and compliance work is straightforward: contract analysis, regulatory monitoring, risk flagging, and documentation each map cleanly onto specialized agent roles. The problem is not the architecture — it is what happens when that architecture operates without reliable human oversight at consequential decision points.

Current multi-agent systems in legal contexts perform well on pattern recognition: identifying clause types, flagging deviations from standard language, surfacing regulatory references. Where they produce unreliable outputs is in the judgment layer — interpreting ambiguous language, weighing competing obligations, and assessing risk in context. These are not edge cases in legal work. They are the core of it.

When a multi-agent system produces an incorrect legal interpretation that informs a business decision, accountability is genuinely unclear across the agent framework, the model provider, and the organization that deployed it. No jurisdiction has resolved that question. Until it is resolved, autonomous legal review at the decision-making level carries organizational risk that the efficiency gain does not offset.

What is production-ready: multi-agent AI functioning as an AI-powered legal assistant — supporting drafting and review tasks, with human sign-off required before any output is acted upon.

2. Autonomous Financial Decision-Making at the Transaction Level

The capability case for multi-agent AI in financial services is real: agents coordinating across market data, account history, behavioral signals, and risk models can process more inputs simultaneously and update recommendations faster than any single-agent system. The question is not whether the technology works — it is whether it can operate autonomously at the transaction level. Across most regulatory environments, it cannot.

The constraint is structural, not technical. AI governance frameworks across multiple jurisdictions require that automated financial decisions affecting consumers be explainable, auditable, and contestable. Distributed reasoning across specialized agents — the same architecture that creates the capability — makes producing that audit trail harder, not easier. Each agent contributes a fragment of the final decision. Reconstructing that into a coherent, contestable rationale is an unsolved engineering and governance problem, not a near-term product release.

What is production-ready sits a tier below full autonomy: an AI-powered financial assistant supporting human advisors — surfacing recommendations, flagging anomalies, preparing analysis — with human authorization required before any decision is executed.
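The assisted tier can be sketched as a decision record that collects each agent's contribution and blocks execution until a named human authorizes it. This is a hypothetical illustration (the class names, agent names, and `advisor-17` ID are invented), and it deliberately does not solve the problem described above: `fragments()` lists the per-agent pieces side by side, but reconciling them into one coherent, contestable rationale remains open.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Contribution:
    agent: str
    inputs_used: List[str]
    finding: str


@dataclass
class DecisionRecord:
    recommendation: str
    contributions: List[Contribution] = field(default_factory=list)
    authorized_by: Optional[str] = None
    authorized_at: Optional[str] = None

    def authorize(self, advisor: str) -> None:
        self.authorized_by = advisor
        self.authorized_at = datetime.now(timezone.utc).isoformat()

    def fragments(self) -> str:
        # Lists each agent's fragment; it does NOT reconcile them into a single
        # contestable explanation, which is the unsolved part.
        return "; ".join(f"{c.agent} ({', '.join(c.inputs_used)}): {c.finding}"
                         for c in self.contributions)


def execute(record: DecisionRecord) -> str:
    if record.authorized_by is None:
        raise PermissionError("human authorization required before execution")
    return f"executed: {record.recommendation} (authorized by {record.authorized_by})"


record = DecisionRecord("rebalance toward target allocation")
record.contributions.append(Contribution("risk_agent", ["risk_profile"], "tolerance is moderate"))
record.contributions.append(Contribution("market_agent", ["index_data"], "equities above target"))

try:
    execute(record)
    blocked = False
except PermissionError:
    blocked = True  # the assistant can recommend, but cannot act alone

record.authorize("advisor-17")
outcome = execute(record)
```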

3. Cross-Organizational Agent Interoperability

Cross-organizational agent interoperability refers to the ability of AI agents from different organizations and systems to discover each other, negotiate tasks, and collaborate autonomously across institutional boundaries — a capability that remains in early-stage protocol development with no standardized enterprise deployment path.

Anthropic's Model Context Protocol (MCP), which standardizes how agents connect to external tools and data sources, and Google's Agent-to-Agent (A2A) protocol, which standardizes how agents communicate with one another, both aim to make multi-agent coordination work across systems and organizations. Both are real, actively developed standards. Neither has achieved the enterprise adoption or interoperability testing depth required to make cross-organizational agent collaboration reliable in production.

The trust and governance layer is further behind. For agents to transact across organizational boundaries autonomously, shared frameworks for liability, data handling, decision authority, and audit are required. Those frameworks do not exist in any standardized form.

Organizations tracking this space should monitor protocol adoption rates and interoperability testing outcomes as the leading indicators of when this use case moves from overpromised to emerging — that progression, when it begins, is likely to move faster than the current state suggests.

How to Evaluate Whether Multi-Agent AI Is Right for Your Organization

The right question before committing to a multi-agent AI system is not whether the technology works — in specific contexts, it does. The question is whether the problem in front of you actually requires it.

Six questions structure that evaluation:

- Is the task genuinely too complex for a single well-configured agent?

Multi-agent coordination adds overhead in orchestration, observability, cost, and debugging complexity. That overhead is only justified when the task cannot be handled within a single agent's context window, tool access, or reasoning capacity.

- Can you define clear, bounded roles for each agent before building?

Agent roles must be defined precisely before deployment, not approximated during it. If you cannot articulate what each agent is responsible for and where its responsibility ends, the architecture is not ready to build.

- Do you have the observability infrastructure to monitor agent behavior in production?

Multi-agent AI systems fail in ways that single agents do not — cascading errors, emergent behaviors at handoff points, coordination failures that are difficult to trace. Monitoring each agent's inputs, outputs, and handoff conditions requires infrastructure investment that organizations consistently underestimate before deployment.

- Is there a human-in-the-loop checkpoint at every high-stakes decision node?

Every production-ready multi-agent system in this audit includes explicit human review at consequential decision points. Removing those checkpoints to reduce latency or cost has consistently produced failure modes more expensive than the efficiency gains they were meant to create.

- Can you quantify what success looks like within 90 days?

Define the metric before deployment — resolution rate, processing time, cost per output. Multi-agent AI systems without a measurable outcome defined upfront tend to expand in scope and cost without producing verifiable value.

- Would a well-configured single agent handle this adequately?

This is the question most evaluations skip. Multi-agent architecture is not an upgrade from single-agent deployment — it is a different tool for a different class of problem. Most knowledge worker workflows that feel complex are still within the scope of a well-configured personal AI assistant, which is the right baseline to exhaust before adding coordination overhead.
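On the observability question, the minimum useful infrastructure is a trace that records every agent call's input, output or error, and latency under a shared run ID, so a failure can be reconstructed hop by hop. The sketch below is a hypothetical in-memory version (the `traced` decorator, the two toy agents, and the `TRACE` list are illustrative); a production system would export the same records to a tracing backend.

```python
import functools
import time
import uuid
from typing import List

TRACE: List[dict] = []  # in production these records would go to a tracing backend


def traced(agent_name: str):
    """Record each call's input, output or error, and latency under a shared trace id."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(payload, *, trace_id: str):
            record = {"trace_id": trace_id, "agent": agent_name, "input": payload}
            start = time.perf_counter()
            try:
                record["output"] = fn(payload)
                record["status"] = "ok"
                return record["output"]
            except Exception as exc:
                record["status"] = f"error: {exc}"
                raise
            finally:
                record["latency_ms"] = (time.perf_counter() - start) * 1000
                TRACE.append(record)
        return wrapper
    return decorator


@traced("extractor")
def extract(text):
    return [part.strip() for part in text.split(",") if part.strip()]


@traced("counter")
def count(items):
    if not items:
        raise ValueError("empty handoff")  # the error surfaces downstream of its cause
    return len(items)


run_ok = str(uuid.uuid4())
total = count(extract("a, b, c", trace_id=run_ok), trace_id=run_ok)  # 3

run_bad = str(uuid.uuid4())
try:
    count(extract("", trace_id=run_bad), trace_id=run_bad)
except ValueError:
    pass

# Reconstruct the failed run hop by hop from the trace.
failed_hops = [(r["agent"], r["status"]) for r in TRACE if r["trace_id"] == run_bad]
# [("extractor", "ok"), ("counter", "error: empty handoff")]
```

The failed run shows the typical multi-agent pattern: the extractor reports success, and the error only appears one hop later. Without per-handoff records, the root cause (an empty input upstream) would be hard to locate.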

What to Watch: Multi-Agent AI in 2026 and Beyond

Three developments will determine how quickly the maturity classifications in this article shift — and which use cases move tiers first.

- Agent communication protocol standardization

Anthropic's Model Context Protocol (covering how agents connect to tools and data) and Google's Agent-to-Agent protocol (covering how agents communicate with one another) are the two most actively developed standards for agent interoperability across systems. The pace at which these achieve enterprise adoption, and whether they converge toward interoperability rather than fragment into competing ecosystems, is the leading technical indicator for when cross-organizational multi-agent coordination becomes viable. Organizations evaluating multi-agent AI vendors should treat protocol alignment as a selection criterion now.

- Regulatory frameworks for autonomous AI decisions

The financial services and legal use cases in the overpromised tier are not waiting on technical development — they are waiting on regulatory clarity around explainability, auditability, and liability. When that clarity arrives, those use cases are likely to move from overpromised to emerging faster than the current state suggests. Organizations in regulated industries should be building governance infrastructure now rather than waiting for the regulatory trigger.

- Inference cost trajectory

The economics of running multiple AI agents working together shift materially as inference costs decline. Several emerging use cases are constrained partly by cost — where coordination overhead and per-agent inference cost exceed the efficiency gain at current pricing. As that cost curve drops, the production-ready tier will expand beyond what technical development alone would predict. Organizations building business cases for multi-agent AI today should model cost scenarios at both current and projected inference pricing before making infrastructure commitments.
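The cost-scenario modeling recommended above can start as simple arithmetic. In this sketch every number is a hypothetical placeholder: 8,000 tokens for a single agent, four agents at 6,000 tokens each plus 2,000 orchestration tokens per handoff, a $0.15 value gain per task from the multi-agent design, and two per-1,000-token prices. The structure is the point: agent tokens scale with agent count, handoff overhead adds on top, and the same design can lose money at one price and pay off at another.

```python
def cost_per_task(agents: int, tokens_per_agent: int,
                  tokens_per_handoff: int, usd_per_1k_tokens: float) -> float:
    """Inference cost of one task in a linear pipeline: each agent's own tokens
    plus orchestration tokens on each of the (agents - 1) handoffs."""
    handoffs = max(agents - 1, 0)
    tokens = agents * tokens_per_agent + handoffs * tokens_per_handoff
    return tokens / 1000 * usd_per_1k_tokens


def incremental_margin(value_gain_usd: float, usd_per_1k_tokens: float) -> float:
    """Value the multi-agent design adds per task, minus its extra cost over one agent.
    The token counts are hypothetical placeholders."""
    single = cost_per_task(1, 8_000, 0, usd_per_1k_tokens)    # 8,000 tokens
    multi = cost_per_task(4, 6_000, 2_000, usd_per_1k_tokens)  # 24,000 + 3 * 2,000 tokens
    return value_gain_usd - (multi - single)


# Hypothetical prices in USD per 1,000 tokens: today versus a projected lower rate.
scenarios = {"current": 0.010, "projected": 0.002}
margins = {name: round(incremental_margin(0.15, price), 3) for name, price in scenarios.items()}
# margins == {"current": -0.07, "projected": 0.106}
```

At the hypothetical current price the extra $0.22 per task outweighs the $0.15 gain; at the projected price the extra cost falls to $0.044 and the same architecture clears the bar.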

FAQs

What is a multi-agent in AI?

Multi-agent AI is a system where multiple AI agents work together to complete a task, each handling a specific role. These agents communicate, coordinate, and pass outputs between each other to achieve a shared goal. It is designed for complex workflows that a single agent cannot handle efficiently.

How does a multi-agent AI system work?

A multi-agent AI system works by breaking a task into smaller subtasks and assigning each to a specialized agent. An orchestration layer manages communication, sequencing, and conflict resolution between agents. This allows parallel processing and more scalable problem-solving.

What is the difference between single-agent and multi-agent AI?

Single-agent AI handles a task end-to-end within one system, while multi-agent AI distributes work across multiple specialized agents. Multi-agent systems scale better for complex workflows but introduce coordination overhead and higher cost. They are not a default upgrade from single-agent setups.

What are the benefits of multi-agent AI systems?

Multi-agent AI systems enable specialization, parallel processing, and built-in redundancy across agents. This can improve scalability and performance in complex workflows. However, these benefits only appear when coordination is tightly controlled.

Is multi-agent AI better than single-agent AI?

Multi-agent AI is not inherently better than single-agent AI—it is suited for a different class of problems. For bounded or well-defined tasks, a single agent is often more efficient and easier to manage. Multi-agent systems are justified only when complexity requires coordination.

Are multi-agent AI systems reliable in production?

Multi-agent AI systems can be reliable when agent roles are bounded, handoffs are explicit, and human review exists at critical steps. Fully autonomous operation at scale is still limited in most domains. Most production systems include human-in-the-loop checkpoints.

How do you evaluate if multi-agent AI is right for your use case?

Evaluate multi-agent AI by asking whether the task truly requires decomposition, whether roles can be clearly defined, and whether success can be measured within a short timeframe. You also need infrastructure to monitor agent behavior. If a single agent can handle the task, multi-agent is usually unnecessary.

What frameworks are used to build multi-agent AI systems?

Common frameworks for building multi-agent AI systems include MetaGPT, Microsoft's AutoGen, CrewAI, and LangGraph from the LangChain ecosystem. These tools help manage agent roles, memory, and coordination logic. The choice depends on system complexity and integration requirements.

Is multi-agent AI the same as agentic AI?

Multi-agent AI and agentic AI are related but not the same. Agentic AI refers to systems that can act autonomously toward a goal, while multi-agent AI refers to multiple agents coordinating within a shared system. A multi-agent system can be agentic, but not all agentic systems involve multiple agents.

Conclusion

Multi-agent AI is not a single technology with a uniform maturity level — it is an architectural approach whose value depends entirely on whether the problem requires it. The production record is real in specific domains. The gap between that record and broader vendor claims is equally real.

The most useful frame for any organization evaluating multi-agent AI use cases is whether the deployment conditions are in place: bounded agent roles, explicit handoff logic, observable behavior, and human oversight at consequential decision points. Where those conditions exist, the outcomes are measurable. Where they do not, the architecture adds complexity without proportionate return.

The tier classifications here will shift as protocol standards mature, regulatory frameworks consolidate, and inference costs decline. The evaluation logic will not.