AI-Powered Legal Assistant: Practical Guide for Lawyers

Legal work has always been time-intensive. Research, drafting, contract review, compliance tracking — each task demands precision and hours that most legal professionals do not have to spare. AI-powered legal assistants are changing that equation. These tools apply artificial intelligence to the most time-consuming parts of legal work, helping attorneys, paralegals, and legal teams move faster without sacrificing accuracy.

This guide covers what AI-powered legal assistants are, how they work, their core use cases, and what to consider before adopting one.

What Is an AI-Powered Legal Assistant?

An AI-powered legal assistant is software that uses artificial intelligence (specifically large language models, natural language processing, and retrieval-augmented generation) to automate and augment legal workflows. Rather than replacing legal judgment, these tools handle the research, drafting, review, and administrative tasks that consume a disproportionate share of a legal professional's working hours.

Unlike general-purpose AI chatbots, an AI legal assistant is grounded in vetted legal sources: case law databases, statutes, regulations, and often a firm's own document repository. That grounding is what separates a useful legal tool from one that generates plausible-sounding but unreliable output.

Adoption is no longer limited to large firms. Solo practitioners, in-house counsel, paralegals, and legal operations teams are integrating these tools into daily workflows — not as a future investment, but as current infrastructure.

- AI Legal Assistant vs. Traditional Legal Research Tools

Traditional legal research tools operate on keyword logic. A researcher inputs specific terms, filters by jurisdiction or date, and manually reads through results to extract what is relevant. The process is reliable but slow, and the quality of output depends heavily on how well the researcher frames the query.

An AI-powered legal assistant approaches the same task differently. A lawyer can ask a question in plain language — the same way they would ask a colleague — and receive a synthesized answer with cited sources drawn from relevant case law and statutes. The AI identifies conceptually similar cases even when terminology differs, surfaces related legal theories, and tracks emerging trends across jurisdictions.

The practical difference is not just speed. It is the shift from retrieving raw materials to receiving analyzed output — with the attorney's role moving from research execution to research validation.

What Can an AI-Powered Legal Assistant Handle?

Legal work spans a wide range of task types — some requiring deep expertise, others consuming time without demanding much judgment. AI legal assistants are most valuable in the second category, handling high-volume, process-driven work so attorneys can concentrate on the work that genuinely requires their training.

- Legal Research

Research has traditionally been one of the most time-intensive parts of legal practice. An attorney building a litigation strategy or advising on a novel issue might spend hours combing through case law before reaching a defensible position.

AI legal assistants compress that process. Attorneys can query in plain language, receive synthesized answers drawn from relevant statutes and case law, and follow citations directly to source material for verification. The attorney's role shifts from locating authority to evaluating it — a meaningful reduction in time without a reduction in rigor.

- Contract Review and Analysis

Contract review is typically the highest-volume, most repetitive task for both law firms and in-house legal teams. AI assistants can pre-screen agreements against a standard playbook, flag clause deviations, identify missing provisions, and surface risk indicators — before an attorney opens the document.

This does not remove the attorney from the process. It focuses their attention on what actually needs legal judgment rather than requiring them to read every line of every standard agreement before determining what warrants attention.
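To make the playbook idea concrete, here is a minimal sketch in Python of a pre-screen that reports missing provisions and risk indicators. The clause names and patterns are hypothetical examples, not taken from any real playbook. A production tool would rely on a language model or trained classifier rather than regular expressions, so treat this only as an illustration of the flow.

```python
import re
from dataclasses import dataclass, field

# Hypothetical playbook: clause name -> pattern suggesting the clause is present.
REQUIRED_CLAUSES = {
    "governing law": r"governed by the laws? of",
    "limitation of liability": r"limitation of liability",
    "confidentiality": r"confidential",
}

# Hypothetical risk indicators an attorney may want surfaced first.
RISK_PATTERNS = {
    "unlimited liability": r"unlimited liability",
    "automatic renewal": r"automatically renew",
}


@dataclass
class ScreenResult:
    missing: list = field(default_factory=list)  # required clauses not found
    risks: list = field(default_factory=list)    # risk indicators found


def prescreen(contract_text: str) -> ScreenResult:
    """Return which required clauses look absent and which risk terms appear."""
    text = contract_text.lower()
    result = ScreenResult()
    for name, pattern in REQUIRED_CLAUSES.items():
        if not re.search(pattern, text):
            result.missing.append(name)
    for name, pattern in RISK_PATTERNS.items():
        if re.search(pattern, text):
            result.risks.append(name)
    return result


sample = (
    "This Agreement shall be governed by the laws of Delaware. "
    "The term will automatically renew each year."
)
report = prescreen(sample)
print("Missing:", report.missing)
print("Risks:", report.risks)
```

The output is a short list of items for the attorney to look at first. Whether a flagged item actually matters in context remains a legal judgment.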

- Document Drafting

AI legal assistants can generate first-pass drafts of memos, demand letters, NDAs, engagement letters, and motion outlines from provided facts and templates. The output reflects the firm's tone, inserts standard language where applicable, and leaves judgment-dependent sections flagged for attorney input.

The operative word is first-pass. AI-generated drafts require review and carry the same professional responsibility as any other work product the attorney submits or sends.

- Due Diligence

In M&A contexts, due diligence requires reviewing large volumes of documents across a data room under time pressure. AI tools can categorize documents automatically, surface change-of-control clauses, flag hidden liabilities, and map document versions — tasks that would otherwise require significant paralegal and associate hours to complete manually.

- Compliance Monitoring

Regulatory requirements shift continuously, particularly for organizations operating across multiple jurisdictions or in heavily regulated industries. AI legal assistants can monitor for relevant regulatory changes, flag updates that affect existing policies, and identify gaps between current practice and new requirements — reducing the risk of compliance failures that stem from information lag rather than negligence.

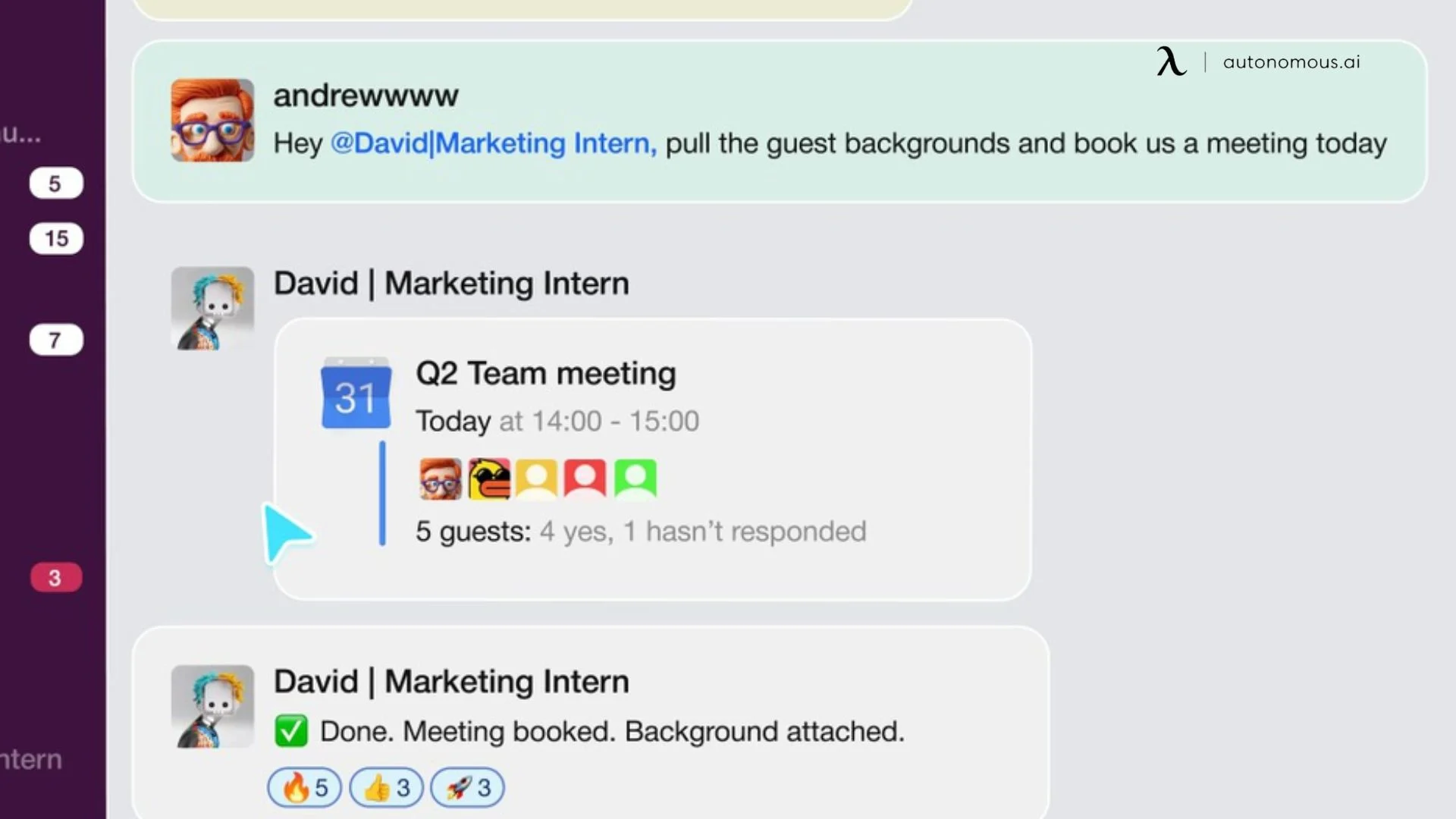

- Legal Request Intake and Triage

For in-house legal teams, managing the inflow of requests from across the business is a structural challenge. As request volume scales with company growth, manual triage becomes unsustainable. AI-powered intake systems — the same logic behind tools like an AI scheduling assistant that manages competing calendar demands — capture requests from whatever channel they arrive in, classify them by type and urgency, and route them without requiring manual intervention.
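The capture, classify, and route flow described above can be sketched in a few lines of Python. The keyword sets, queue names, and the `triage` function are all hypothetical, and simple keyword rules stand in here for whatever classifier a real intake system would use.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword sets for request types (checked in this order).
TYPE_KEYWORDS = {
    "contract": {"nda", "msa", "contract", "agreement", "vendor"},
    "employment": {"termination", "hiring", "employee", "leave"},
}
URGENT_KEYWORDS = {"urgent", "asap", "today", "deadline"}

# Hypothetical routing table: request type -> queue name.
ROUTES = {
    "contract": "commercial-queue",
    "employment": "employment-queue",
    "general": "general-queue",
}


@dataclass
class Ticket:
    request_type: str
    urgent: bool
    queue: str


def triage(message: str) -> Ticket:
    """Classify a free-text request by type and urgency, then pick a queue."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    request_type = "general"  # fallback when no keyword matches
    for name, keywords in TYPE_KEYWORDS.items():
        if words & keywords:
            request_type = name
            break
    urgent = bool(words & URGENT_KEYWORDS)
    return Ticket(request_type, urgent, ROUTES[request_type])


print(triage("Need the vendor NDA signed ASAP"))
print(triage("Question about parental leave policy"))
```

Keeping a "general" fallback queue means an unrecognized request still reaches a person instead of being dropped.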

Who Is an AI Powered Legal Assistant For?

The answer has broadened considerably in recent years. AI legal tools were initially adopted by large firms with dedicated technology budgets. That is no longer the primary profile. The range of professionals integrating these tools into daily practice now spans firm size, role, and legal context.

- Solo Attorneys and Small Firms

A solo practitioner billing 40 hours a week is also doing the work of a researcher, a paralegal, and an office administrator. There is no associate to delegate research to, no paralegal to handle first-pass contract review, no ops team managing intake. Every non-billable hour spent on those tasks is an hour not spent on client work — and there is a hard ceiling on how many hours exist in a day.

AI legal assistants address this at the structural level. Research that previously required two hours of case law review can be reduced to a verified summary with cited sources. Standard contracts can be pre-screened before the attorney opens them. Routine correspondence can be drafted from a prompt. None of this eliminates the attorney's role — it eliminates the parts of the role that do not require a law degree to initiate.

- In-House Legal Teams

In-house legal teams operate under a pressure that private practice does not — they are evaluated not just on legal outcomes but on speed. When contract turnaround takes two weeks, when employment questions go unanswered for days, when business units start routing around legal to avoid the delay, the legal function stops being perceived as a resource and starts being perceived as a bottleneck. That perception, once established, is difficult to reverse and creates internal friction that compounds the operational problem.

The underlying cause is rarely incompetence — it is structural capacity. A two- or three-person legal team at a growing company is absorbing request volume that scales with headcount, revenue, and complexity, without a corresponding increase in legal staff. Proactive AI tools address this by absorbing the intake, triage, and routine review work that creates the backlog — not by replacing legal judgment, but by ensuring that legal judgment is not being spent on work that does not require it.

- Small Business Owners and Non-Lawyers

Most small business owners are not looking for a lawyer when they search for help with a contract or a dispute — they are looking for enough clarity to make an informed decision about whether they need one. That distinction matters. The gap is not access to legal representation; it is access to basic legal comprehension at the moment the question arises, before the cost and friction of a formal consultation is justified.

AI legal assistants serve that function. A business owner reviewing a vendor agreement can identify which clauses warrant concern before engaging an attorney, arriving at any subsequent consultation with specific questions rather than a general request to "look this over." That preparation changes the quality and cost-efficiency of the legal engagement itself — and in straightforward matters, it may resolve the question without one being necessary at all.

- Paralegals and Legal Operations Professionals

Paralegals and legal ops professionals are often the most direct beneficiaries of AI legal tools, because the tasks AI handles most effectively — document organization, research synthesis, intake coordination, first-pass drafting — are precisely the tasks that consume the largest share of their working hours.

The more consequential shift is qualitative. When AI absorbs the high-volume, lower-judgment work, the paralegal's capacity moves toward tasks that require institutional knowledge, client relationship context, and judgment that cannot be systematized: complex document review, deposition preparation support, managing the edge cases that fall outside any automated workflow. That is a more defensible and more professionally valuable position — and one that AI makes more accessible rather than less.

What to Look for in an AI-Powered Legal Assistant

The market for AI legal tools has expanded fast enough that meaningful quality differences exist between products occupying the same category. Evaluating them on feature lists alone is insufficient — the variables that determine whether a tool actually gets used consistently are largely operational, not technical.

- Data Security and Confidentiality

Attorney-client privilege creates a data handling obligation that does not exist in most other professional contexts. Before any AI tool touches client information, the relevant questions are specific: Does the vendor use client data to train its models? Where is data stored and under which jurisdiction's privacy law?

- Source Grounding and Citation Transparency

An AI legal tool that cannot show its work is difficult to trust professionally. Output grounded in verified legal databases — with citations that link directly to source material — allows the attorney to verify before relying. Output generated without that grounding requires the attorney to independently confirm every claim, which eliminates most of the time benefit.

The practical standard: if the tool cannot tell you where a legal conclusion came from, treat the output as a starting point for research, not a research result.
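One way to apply that standard mechanically is to separate claims whose citation resolves to a known source from claims that have no traceable source. The sketch below assumes a tool that returns each claim with an optional source identifier. The `Claim` type, the identifiers, and the placeholder claims are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    text: str
    source_id: Optional[str]  # None when the tool cited nothing


def split_by_grounding(claims, known_source_ids):
    """Separate claims with a resolvable citation from those without one."""
    grounded, needs_research = [], []
    for claim in claims:
        if claim.source_id is not None and claim.source_id in known_source_ids:
            grounded.append(claim)
        else:
            needs_research.append(claim)
    return grounded, needs_research


# Hypothetical identifiers for documents in a vetted database.
known = {"case-001", "statute-17"}
claims = [
    Claim("Placeholder claim A", "case-001"),
    Claim("Placeholder claim B", None),
    Claim("Placeholder claim C", "case-999"),  # cites a source that does not resolve
]
grounded, needs_research = split_by_grounding(claims, known)
print([c.text for c in grounded])
print([c.text for c in needs_research])
```

A resolvable citation only shows that the source exists. It does not show that the source supports the claim, so the grounded list still needs the attorney's read of the cited material.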

- Integration with Existing Workflow

The same integration standard that applies to an AI secretary managing administrative workflows applies here: a tool that requires attorneys to leave their existing environment to use it faces a structural adoption ceiling regardless of its capabilities. The most effective AI legal tools embed where legal work already happens (inside document management systems like iManage or NetDocuments, within email, inside contract repositories) rather than requiring a separate login and context switch for every task.

- Accuracy Limitations and Hallucination Risk

No current AI legal tool is immune to generating confident but incorrect output. The difference between tools lies in how they mitigate this risk — through RAG architecture, through citation requirements, through human review prompts built into the workflow — and how transparently they communicate the limitation to users.

Any evaluation process should include testing the tool against known legal questions with verifiable answers, not just assessing interface quality and feature breadth. How the tool performs on edge cases and ambiguous queries is more informative than how it performs on straightforward ones.
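A small harness makes this kind of testing repeatable. The sketch below assumes the tool under evaluation can be wrapped in a function that takes a question and returns answer text. The `ask` callable, the test questions, and the expected phrases are hypothetical and would be replaced with questions whose answers the firm has already verified.

```python
from typing import Callable, Dict, Iterable, List, Tuple


def score_tool(
    ask: Callable[[str], str],
    cases: Iterable[Tuple[str, str]],
) -> Dict[str, List[str]]:
    """Run each known question and check the answer for the expected phrase."""
    results: Dict[str, List[str]] = {"passed": [], "failed": []}
    for question, expected_phrase in cases:
        answer = ask(question)
        bucket = "passed" if expected_phrase.lower() in answer.lower() else "failed"
        results[bucket].append(question)
    return results


# Stand-in for the tool being evaluated (an assumption for this sketch).
def fake_tool(question: str) -> str:
    canned = {
        "What notice period does our template NDA require?":
            "The template requires 30 days written notice.",
    }
    return canned.get(question, "I am not sure.")


# Hypothetical firm-specific questions with answers verified in advance.
test_cases = [
    ("What notice period does our template NDA require?", "30 days"),
    ("Which state's law governs our standard MSA?", "Delaware"),
]
print(score_tool(fake_tool, test_cases))
```

Phrase matching is a crude check. It catches an answer that omits the expected phrase but not one that merely mentions it, so failures and a sample of passes still deserve a manual read.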

How Autonomous Intern Fits into a Legal Professional's Workflow

Most AI legal tools are built for the firm's infrastructure — enterprise contracts, legal database subscriptions, DMS integrations. They are designed for scale and priced accordingly. For solo attorneys, small firm lawyers, and lean in-house teams, that pricing model often makes adoption economically unjustifiable relative to the actual volume of work being processed.

Autonomous Intern occupies a different position. It is a personal AI assistant device that operates through the messaging channels already in use: Slack, WhatsApp, Telegram, Discord. No per-seat subscription. No implementation timeline. No separate platform to log into between tasks.

What it handles in a legal workflow is specific.

Contract review — flagging risks and missing clauses

Before a contract reaches the attorney for substantive review, Intern can read through the document and surface potential risk areas and missing standard clauses. This is not a replacement for legal analysis — the attorney's judgment on whether a flagged clause is actually problematic in context remains essential. What it removes is the preliminary read-through that precedes that judgment, which in a high-volume contract environment represents a meaningful reduction in time per document.

Case research — precedent lookup and statute summaries

Autonomous Intern can pull relevant precedents and summarize applicable statutes based on the case facts provided. For a solo practitioner without a research associate, this compresses the initial research phase — surfacing the relevant authority so the attorney begins their analysis from an organized starting point rather than a blank search. As with any AI-generated research output, citations and holdings require independent verification before reliance.

Client email drafts — status updates and next steps

Client communication is one of the highest-volume, lowest-judgment writing tasks in legal practice. Status updates, acknowledgment emails, and request-for-information follow-ups consume time without requiring the level of attention that substantive legal work demands. Intern functions as an AI email assistant in this context: it drafts from existing case context, in the attorney's tone, within the messaging channel where the communication is already happening. The attorney reviews and sends; the drafting burden shifts.

Court filing deadlines — auto-tracking and reminders

Missed deadlines in litigation are rarely recoverable errors. Intern operates similarly to an AI scheduling assistant for deadline management: it tracks court filing dates from the case information provided and surfaces reminders ahead of due dates, acting as a persistent monitoring layer for the obligations that carry the most professional risk if they slip. This does not replace docketing software for high-volume litigation practices, but for solo attorneys managing a moderate caseload without dedicated docketing support, it closes a genuine gap.
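The reminder logic itself is simple date arithmetic, sketched below in Python. This is an illustration only, not a description of how Intern is implemented. The reminder offsets are an assumed policy, and the due date is taken as given: computing a deadline from court rules, weekends, or holidays is out of scope.

```python
from datetime import date, timedelta

# Assumed reminder policy: 14, 7, and 1 day(s) before the due date.
REMINDER_OFFSETS_DAYS = (14, 7, 1)


def reminder_dates(due: date, today: date, offsets=REMINDER_OFFSETS_DAYS):
    """Return the reminder dates that have not already passed, earliest first."""
    candidates = sorted(due - timedelta(days=d) for d in offsets)
    return [d for d in candidates if d >= today]


# Hypothetical filing due in ten days.
due = date(2025, 3, 20)
today = date(2025, 3, 10)
for reminder in reminder_dates(due, today):
    print(reminder.isoformat())
```

With these inputs the 14-day reminder has already passed, so only the 7-day and 1-day reminders remain.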

Billing support — time entry summaries

Time entry is consistently one of the most deferred administrative tasks in legal practice. It accumulates, the details fade, and entries end up reconstructed from memory rather than recorded accurately. Intern generates time entry summaries from conversations and case activity, reducing the gap between the work happening and the entry being logged. More accurate time entries translate directly to more complete billing — a financial impact that is straightforward to measure.

Billable hours tracking — auto-logging from conversations

Related but distinct: Intern can track time spent per case automatically from conversation context, without requiring the attorney to initiate a timer or interrupt the work to record it. For attorneys who routinely underreport billable time because logging it requires a context switch, this addresses the structural reason the time goes unrecorded — and contributes to a more productive work environment by removing a low-value interruption from billable work hours.

Professional confidentiality — on-device data handling

Bar ethics obligations under Model Rule 1.6 require attorneys to take reasonable measures to protect client information — an obligation that extends to every AI tool handling case data, correspondence, or documents in practice. Cloud-based legal AI tools process that data through external server infrastructure, where confidentiality protection depends on vendor-level security controls and contractual privacy commitments.

Autonomous Intern runs on OpenClaw, an open-source AI agent framework that runs AI locally on the device. No client correspondence, case context, or document content routed through Intern is transmitted to external servers. For attorneys conducting due diligence on AI tools against their professional responsibility obligations, the distinction between on-device and cloud-based processing is architectural — and that is a more durable guarantee than a privacy policy.

What AI-Powered Legal Assistants Can't Do

AI legal assistants have expanded what is operationally possible for legal professionals working under time and resource constraints. That expansion has limits — and understanding them is as important as understanding the capabilities.

- Hallucination & Output Verification

The most consequential failure mode is not an obviously wrong answer — it is a plausible one. A fabricated citation formatted correctly, attributed to a real court, with a holding consistent with the legal area being researched, can pass a surface review and reach a filing before the error is caught. This has occurred in documented legal proceedings, resulting in sanctions against attorneys who submitted AI-generated research without independent verification.

RAG-based tools reduce this risk by grounding output in verified sources, but do not eliminate it. Any AI-generated research, draft, or analysis requires attorney review before it is relied upon or submitted. AI output is a starting point, not finished work product.

- Jurisdictional Coverage Gaps

Most AI legal research tools are built around U.S. federal and state law. Coverage of international jurisdictions, specialized regulatory frameworks, and local court rules varies significantly by platform. Attorneys practicing in niche areas or across multiple jurisdictions should verify the scope of a tool's legal database before relying on its research output for matters outside its primary coverage area.

- No Substitute for Legal Judgment

An AI legal assistant can identify that a limitation of liability clause is capped below company standards. It cannot determine whether accepting that cap is strategically appropriate given the relationship, the deal economics, and the negotiating context. Pattern recognition and synthesis are different from the contextual judgment legal practice requires at its most consequential moments — in litigation strategy, high-stakes negotiations, and matters where facts are ambiguous or law is unsettled.

- Professional Responsibility Obligations

AI adoption does not suspend existing professional obligations; it extends them into a new context. Attorneys remain responsible for supervising AI-generated work product, ensuring client communications meet professional standards regardless of how they were drafted, and maintaining competence in the technology being used. Several state bars have issued specific guidance on AI use covering disclosure obligations, supervision requirements, and confidentiality considerations. Staying current with that guidance is part of responsible adoption.

- Predictive Analytics

Litigation outcome tools analyze historical case data — judge tendencies, court patterns, fact profiles — to estimate probable outcomes. Their output reflects historical patterns, not guarantees. Systemic biases in historical data carry forward into model predictions, and novel fact patterns fall outside the reliable range of any model trained on prior decisions. Predictive analytics is one structured input among several — useful for calibrating risk, not for replacing the judgment litigation strategy requires.

FAQs

Is an AI-powered legal assistant worth it for small law firms?

Yes. An AI-powered legal assistant helps small law firms reduce non-billable work by automating research, contract review, and administrative tasks. This allows attorneys to focus more on client work while maintaining accuracy. With pricing models ranging from enterprise subscriptions to one-time options such as Autonomous Intern, adoption is now practical across firm sizes.

Can I use AI to review contracts?

Yes. An AI-powered legal assistant can review contracts by identifying risks, missing clauses, and deviations from standard terms. It acts as a first-pass filter before attorney review. Attorneys must still verify and interpret the output to ensure legal accuracy and context.

AI legal assistant vs. paralegal — what’s the difference?

An AI legal assistant automates repetitive legal tasks, while a paralegal provides judgment, context, and client interaction. AI handles research, drafting, and document review at scale. Paralegals focus on nuanced work that requires discretion and professional accountability.

How do law firms use AI-powered legal assistants?

Law firms use AI-powered legal assistants for legal research, case law synthesis, contract review, due diligence, compliance monitoring, and drafting client communications. Larger firms integrate AI into existing systems like document management platforms, while smaller firms adopt lightweight tools or personal AI assistants to streamline daily operations.

What is the best AI-powered legal assistant?

The best AI-powered legal assistant depends on workflow, budget, and data security requirements. Enterprise tools offer deep integrations and legal databases. Simpler tools focus on speed and usability. The most effective option is the one used consistently within daily workflows.

Does AI legal software hallucinate — and how serious is the risk?

Yes. AI legal software can produce incorrect or fabricated citations, known as hallucinations. AI-powered legal assistants using retrieval-augmented generation reduce this risk by grounding outputs in real legal sources. Attorneys must still verify all AI-generated work before relying on it.

Is it ethical for lawyers to use AI legal assistants?

Yes. Lawyers can ethically use AI-powered legal assistants if they supervise outputs and protect client confidentiality. Attorneys must comply with professional obligations such as the duty of competence under Model Rule 1.1. This includes understanding how the tool works and ensuring all work meets legal standards.

Is AI legal assistant software safe for client data?

It depends on the tool’s data architecture. Cloud-based AI legal assistants process data on external servers, while on-device solutions handle data locally, which offers stronger confidentiality protection and better alignment with professional obligations around client data security.

What are the risks of using AI in legal practice?

The main risks of using AI in legal practice are inaccurate or hallucinated outputs, over-reliance on automation, and potential data security issues. Managing them requires careful tool selection, proper verification of results, and ongoing attorney oversight.

Will AI replace paralegals?

No. AI-powered legal assistants will not replace paralegals but will change their role. AI automates routine tasks like research and document organization. Paralegals focus more on work that requires judgment, coordination, and client context.

Will AI replace lawyers?

No. AI-powered legal assistants will not replace lawyers. AI can automate structured tasks like research and drafting. It cannot replicate legal judgment, ethical responsibility, or strategic decision-making in complex matters.

Conclusion

AI-powered legal assistants have moved from experimental to operational. Research that took hours now takes minutes. Contracts get pre-screened before an attorney opens them. Deadlines are tracked automatically. Client correspondence gets drafted in the time it used to take to open a blank document.

The tools that deliver the most consistent value are the ones matched precisely to the task layer they are built for — legal database platforms for research and contract analysis, operational tools like Autonomous Intern for the surrounding workflow that consumes time without requiring legal judgment.

The starting point is not finding the most feature-complete tool available. It is identifying where time is actually being lost — and whether AI can close that gap without introducing professional responsibility risk in the process.
