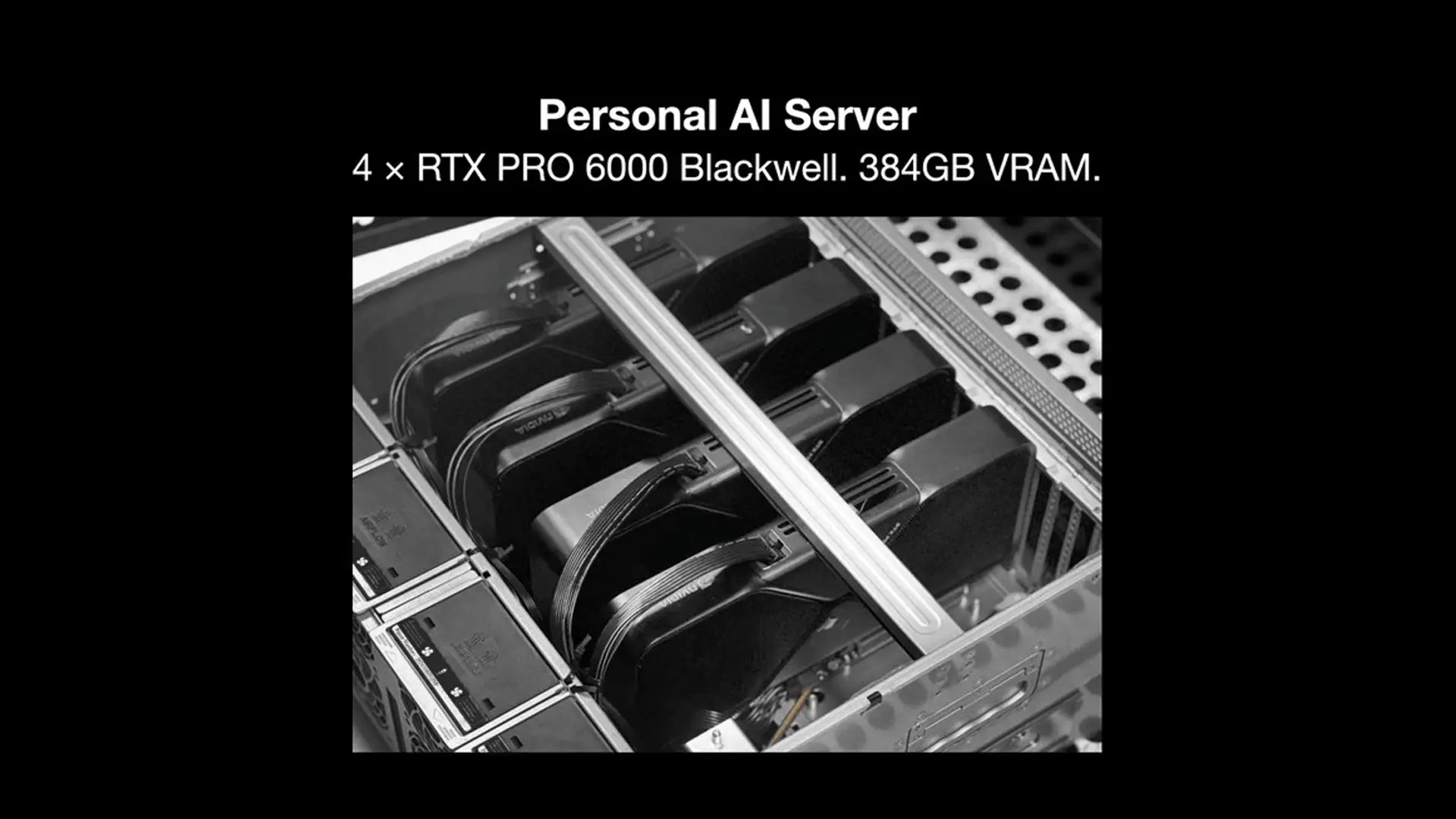

How to Build Your Own Personal AI Server

Build a Personal AI Server with 4× RTX PRO 6000 Blackwell and 384GB VRAM on an AMD EPYC platform. Full parts walkthrough from Autonomous Labs.

Goodbye ChatGPT. Goodbye Claude. Goodbye Gemini.

We just built a Personal AI Server. No API bills. Low latency. Private data. Everything stays on the box, in your room, on your network.

Here's the spec we landed on:

- 4× RTX PRO 6000 Blackwell

- 384GB VRAM

- AMD EPYC platform

If you want to build the same thing, here's the walkthrough — part by part, why we picked each one, and the gotchas we hit along the way.

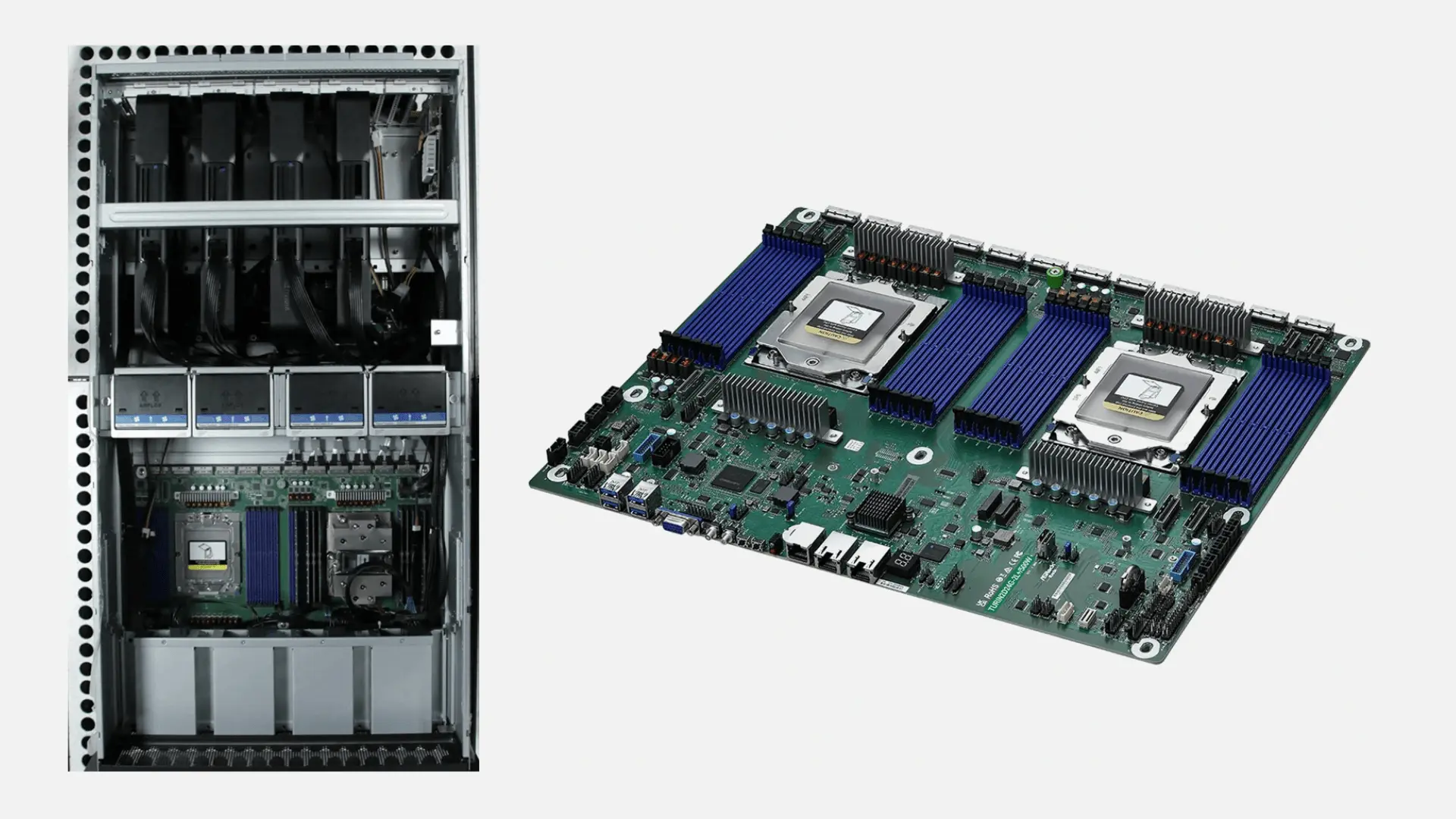

1. Mainboard: ASRock Rack TURIN2D24G-2L+

Dual SP5 sockets. 24 DDR5 DIMM slots. This is a server-class board, so it doesn't look like what you're used to from a desktop build — no traditional PCIe slots sticking out the side. Instead you get 20 MCIO connectors, bifurcated to deliver full PCIe Gen5 x16 to each GPU.

That's the whole point. Four cards, four full x16 connections, no compromises.

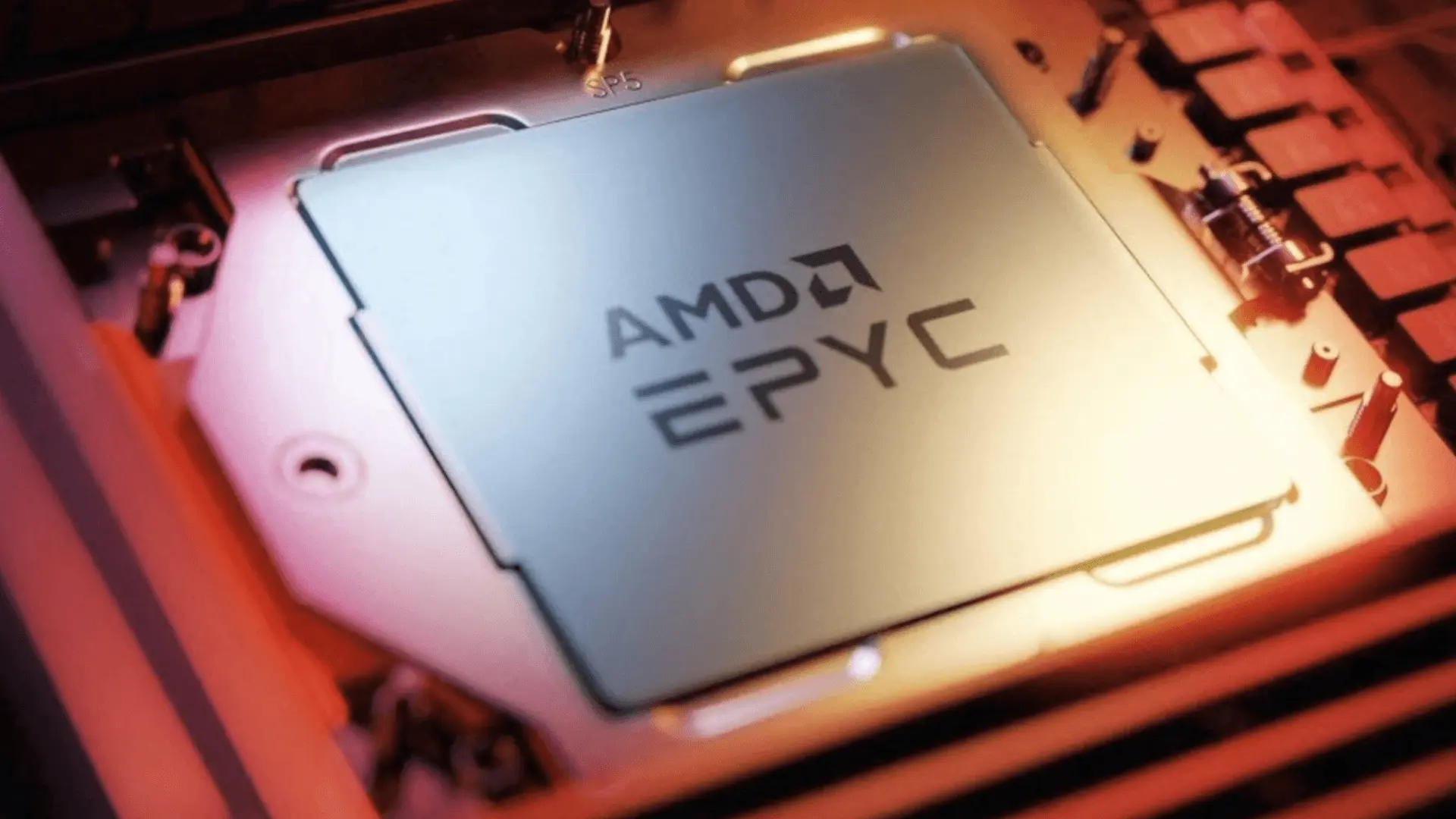

2. CPU: AMD EPYC 9124

16 cores. 200W TDP. Entry-level Genoa SKU — and yes, we picked it on purpose.

Here's the thing most people miss: every Genoa chip gives you 128 PCIe Gen5 lanes regardless of core count. For 4 GPUs, you're buying the chip for the lanes, not the cores. Don't overspend on a 64-core monster you'll never use for inference. The lanes are what move the model weights.

3. Power: 3× 2000W PSUs

6000W total. Yes, really.

Do the math: 4 GPUs at 600W = 2,400W from the cards alone. Add CPU and the rest of the platform and you're sitting around 3,000W under real load. Three PSUs split the load cleanly and give you headroom. You do not want to push a single PSU anywhere near its rated ceiling on a 24/7 inference box.

4. Memory: 8× 48GB DDR5 ECC RDIMM

384GB total.

The model itself lives in VRAM, so people sometimes underbuild system RAM and regret it. System RAM matters for context handling, KV cache offload, and serving multiple models at once. 384GB is what we'd call the practical floor for a 4-GPU inference build. If you want to serve a few models concurrently or push really long contexts, you'll appreciate every gigabyte.

5. Storage: Samsung 990 Pro 1TB NVMe

PCIe Gen4. Fine as a boot drive.

You'll want a second, much bigger NVMe for the model files. Modern open weights are huge — a single frontier-class model can eat 200–400GB. Plan multi-TB based on how many models you want hot on disk.

6. GPUs: 4× PNY RTX PRO 6000 Blackwell Workstation Edition

96GB GDDR7 ECC each. 384GB total VRAM. 600W per card. PCIe Gen5 x16.

This is the most VRAM you can get in any workstation card right now. FP4 support for inference, which is huge for running large models efficiently. Four of these in one box and you can host models that would normally need a small cluster.

7. CPU Cooler: COOLSERVER 2U for AMD EPYC SP5

6 heat pipes. Rated for 380W TDP. Comfortable margin over the 9124's 200W. Match the SP5 socket — desktop AMD or Intel coolers won't fit.

8. Chassis: 5U Rackmount Server Case

Includes cabling and a power distribution board.

Here's the part people underestimate: 4 GPUs in a sealed box generate 2,400W of heat. Most home-built rigs throttle hard without proper airflow architecture. A 5U rackmount built for multi-GPU is the right answer — front-to-back airflow, room for the cards, a PDB to handle three PSUs without a cable nest.

If you try to cram this into a tower case, you're gonna have a bad time.

You Just Built Your Own Personal AI Server

- 4× PNY RTX PRO 6000 Blackwell

- 384GB of VRAM

- AMD EPYC platform with full PCIe Gen5 x16 to every card

On this beast, you can run open frontier models like Qwen, Kimi, MiniMax, and Z.ai.

No API bills. Low latency. Private data.

Happy building.