What Is OpenClaw? The Open-Source AI Agent Explained for 2026

OpenClaw is the most-starred AI agent project of early 2026 — and one of the least understood. Unlike standard AI assistants that respond when prompted, OpenClaw executes tasks autonomously, retains memory across sessions, and connects to tools you already use, all processed locally on your own hardware. The capability is significant. The setup is not trivial.

The maintainer stated it directly: "if you can't understand how to run a command line, this is far too dangerous." This article breaks down what OpenClaw is, how it works, and who can realistically use it.

What Is OpenClaw?

OpenClaw is a free, open-source autonomous AI agent. The distinction between AI agents and AI assistants matters here: OpenClaw doesn't wait to be asked. It executes tasks on your behalf, maintains memory across sessions, and connects to the tools and services you already use, all processed locally on your own hardware.

Rather than a dedicated dashboard, OpenClaw functions as AI for live chat: the interface is the messaging apps you already use, such as WhatsApp, Telegram, Slack, or any of the 20+ supported channels. Under the hood, a Node.js process called the Gateway runs in the background, routing your messages to the connected LLM, executing the appropriate actions, and returning results to the same chat thread. That single process is what allows OpenClaw to stay active, respond to schedules, and initiate contact without waiting for your next message.

Interaction history and configuration are stored on your device, not on a remote server. The agent accumulates a working understanding of your preferences over time. You stop re-explaining recurring tasks. Execution gets more accurate the longer it runs.

Its capabilities extend through OpenClaw skills — modular add-ons that connect the agent to additional tools, automate specific workflows, or unlock new action types. The community-maintained registry, ClawHub, catalogs these and allows the agent to search and pull in new skills automatically as needed.

The software is MIT licensed and runs on macOS, Windows, and Linux. For always-on use without occupying a primary machine, running OpenClaw on a Mac Mini is a practical and widely used setup — low power consumption, silent operation, and sufficient hardware for continuous background tasks.

How Does OpenClaw Work?

When a message arrives, say from Telegram or WhatsApp, the Gateway normalizes it through a channel adapter, which standardizes the input format regardless of which platform it came from. It then identifies the relevant session, loads the associated memory and skills, and passes the full context to the configured LLM. The model reasons over that context and determines what actions to take, and the Gateway executes them. That split between reasoning and execution reflects the broader distinction between large language models and generative AI capabilities.

That complete cycle — receive, route, reason, act, respond, persist — runs for every message.
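The cycle can be sketched in a few lines of Python. This is an illustrative model only, not OpenClaw's code (the real Gateway is a Node.js process): the names `normalize`, `fake_llm`, `handle_message`, and the in-memory `SESSIONS` store are all hypothetical, and the "model" is a keyword stub standing in for a configured LLM.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical per-conversation state: message history only."""
    history: list = field(default_factory=list)


# Hypothetical in-memory session store keyed by (channel, user).
SESSIONS: dict = {}


def normalize(channel: str, raw: dict) -> dict:
    """Receive: map a platform-specific payload to one common shape."""
    return {"channel": channel, "user": str(raw["from"]), "text": raw["body"].strip()}


def fake_llm(context: list, text: str) -> dict:
    """Reason: stand-in for the configured model; picks an action by keyword."""
    if text.lower().startswith("remind"):
        return {"action": "schedule", "reply": "Reminder scheduled."}
    return {"action": "none", "reply": f"Noted ({len(context)} earlier messages)."}


def handle_message(channel: str, raw: dict, llm=fake_llm) -> str:
    msg = normalize(channel, raw)                   # receive
    key = (msg["channel"], msg["user"])
    session = SESSIONS.setdefault(key, Session())   # route
    decision = llm(session.history, msg["text"])    # reason
    # Act: a real gateway would run a tool here; this sketch only records the choice.
    executed = decision["action"]
    reply = decision["reply"]                       # respond
    session.history.append(                         # persist (in memory only)
        {"in": msg["text"], "out": reply, "action": executed}
    )
    return reply
```

Because the model is passed in as a plain callable, swapping it for a different one leaves the other five steps untouched, which is the property the next paragraph describes.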

The LLM itself is interchangeable. OpenClaw is model-agnostic by design, supporting Claude AI, GPT, Gemini, DeepSeek, and any open-source language model hosted locally via compatible endpoints. Switching models doesn't require reconfiguring the rest of the system. For users with stricter privacy requirements, the option to run AI locally through a runtime like Ollama means no data leaves the device — the entire stack operates offline.

Sessions are isolated per channel and per user, so conversations don't bleed into each other across platforms. Each session maintains its own history, memory, and agent context, persisted to disk so the agent survives restarts without losing continuity.
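A minimal sketch of that isolation, assuming one JSON file per channel and user pair. The directory layout and the function names are hypothetical, chosen only to illustrate the idea; OpenClaw's actual on-disk format may differ.

```python
import json
import tempfile
from pathlib import Path


def session_path(root: Path, channel: str, user: str) -> Path:
    """One file per (channel, user) pair, so histories never mix."""
    return root / channel / f"{user}.json"


def load_session(root: Path, channel: str, user: str) -> dict:
    """Return the stored session, or an empty one if none exists yet."""
    path = session_path(root, channel, user)
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"history": []}


def save_session(root: Path, channel: str, user: str, session: dict) -> None:
    """Persist the session so it survives a process restart."""
    path = session_path(root, channel, user)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(session), encoding="utf-8")


def append_message(root: Path, channel: str, user: str, text: str) -> dict:
    """Load, update, and save in one step; returns the updated session."""
    session = load_session(root, channel, user)
    session["history"].append(text)
    save_session(root, channel, user, session)
    return session


if __name__ == "__main__":
    root = Path(tempfile.mkdtemp())
    append_message(root, "telegram", "alice", "hi")
    append_message(root, "slack", "alice", "status?")
    # The same user on two channels gets two separate histories.
    print(load_session(root, "telegram", "alice"))
    print(load_session(root, "slack", "alice"))
```

Reading the session back from disk on every call is what lets state survive a restart in this sketch; a real implementation would also need to sanitize user identifiers before using them as file names.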

The OpenClaw AI agent extends its capabilities through skills, discrete modules that define what the agent can access and automate. Built-in capabilities include shell command execution, browser control, and file system management. Additional OpenClaw skills from ClawHub expand this further: browser automation, email workflows, calendar management, API integrations, and more. Each skill runs where the Gateway process runs, with tool restrictions enforced at the Gateway level before the LLM can act on them.
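Gateway-level enforcement can be pictured as an allowlist check that sits between the model's requested action and the code that runs it. The sketch below assumes a hypothetical tool registry and call format; the names `TOOLS`, `execute_tool_call`, and `ToolNotAllowed` are invented for illustration and are not OpenClaw's API.

```python
class ToolNotAllowed(Exception):
    """Raised when the model requests a tool outside the allowlist."""


def read_file_tool(args: dict) -> str:
    # Stub: a real tool would touch the file system.
    return f"read {args['path']}"


def shell_tool(args: dict) -> str:
    # Stub: a real tool would spawn a process.
    return f"ran {args['cmd']}"


# Hypothetical registry of every tool the gateway knows about.
TOOLS = {"read_file": read_file_tool, "shell": shell_tool}


def execute_tool_call(call: dict, allowed: set) -> str:
    """Run a model-requested tool only if policy permits it."""
    name = call["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    if name not in allowed:
        # The check happens before any tool code runs.
        raise ToolNotAllowed(name)
    return TOOLS[name](call.get("args", {}))
```

With `allowed = {"read_file"}`, a request for `shell` is rejected no matter what the model outputs, because the decision is made by the gateway rather than by the model.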

The Gateway also includes a local web dashboard at 127.0.0.1:18789 for monitoring conversations, managing sessions, and configuring skills without editing config files directly — useful for ongoing maintenance once the initial OpenClaw install is complete.

Why OpenClaw Matters Right Now

The timing of OpenClaw's rise isn't incidental. It arrived at the point where AI agent technology became capable enough to be genuinely useful, while remaining accessible enough to deploy without enterprise infrastructure. That combination — local-first, open-source, messaging-native — hadn't existed in a single project before, and the adoption curve reflected that.

- It represents a broader shift in how AI is being used

The move from prompt-response tools toward autonomous, persistent agents had been building well before OpenClaw appeared — but no single project had made it accessible at the individual user level until this one. What accelerated that shift was the combination of local execution, open licensing, and a messaging-native interface arriving at the same time. Each of those properties existed in other tools separately; OpenClaw was the first to ship them together in a form that non-enterprise users could actually run.

The result was adoption that spread faster than the documentation could keep up with — from individual developers to corporate Slack deployments to government policy discussions, within the span of a few months.

- Where it sits in the AI agent landscape

OpenClaw's position is specific. Unlike hosted agent platforms, it gives users direct control over infrastructure, data residency, and model selection — nothing is managed on a third party's servers by default. Compared to tools like AutoGPT or n8n, it is more opinionated about interaction: messaging apps are the primary interface, which reduces the setup friction for users who don't want to manage a separate dashboard.

It is not a substitute for Claude or ChatGPT for generative or conversational tasks. Its differentiation is execution and persistence across sessions, not reasoning quality.

The Reality of Running OpenClaw Yourself

1. What The Setup Requires

OpenClaw needs Node.js 22 or later — earlier versions produce dependency failures that are difficult to trace back to Node as the root cause. Beyond that, you need a dedicated machine with continuous uptime, since the Gateway is a persistent process. Any interruption drops active sessions and halts scheduled tasks entirely.
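Since a too-old Node.js tends to surface as unrelated-looking dependency errors, it can help to check the version explicitly before installing. A small sketch, assuming `node` is on the PATH and prints its version in the usual `v22.3.0` form; the helper names are hypothetical.

```python
import re
import shutil
import subprocess

MIN_NODE_MAJOR = 22  # minimum stated in the text above


def parse_node_major(version_output: str) -> int:
    """Extract the major version from output such as 'v22.3.0'."""
    match = re.match(r"v?(\d+)\.", version_output.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {version_output!r}")
    return int(match.group(1))


def node_is_supported(version_output: str) -> bool:
    """True if the reported major version meets the minimum."""
    return parse_node_major(version_output) >= MIN_NODE_MAJOR


def installed_node_version():
    """Return the output of `node --version`, or None if node is not installed."""
    node = shutil.which("node")
    if node is None:
        return None
    result = subprocess.run(
        [node, "--version"], capture_output=True, text=True, check=True
    )
    return result.stdout


if __name__ == "__main__":
    version = installed_node_version()
    if version is None:
        print("node not found on PATH")
    else:
        print(version.strip(), "supported:", node_is_supported(version))
```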

Before the agent is operational, you are configuring API keys, gateway tokens, and channel credentials across separate config files. Each messaging platform has its own authentication requirements — Discord, for example, requires bot intent configuration in its Developer Portal before the agent can read messages at all.

2. Where Most Deployments Actually Break

What follows the install is a compounding set of failure points that account for the majority of abandoned deployments.

Config schemas change between releases without migration paths. Settings get renamed or removed, and an upgrade can leave the agent unable to start on a configuration that worked the previous day.

Memory storage compounds the problem over time. Without a pruning strategy configured manually, the memory store grows indefinitely until it either corrupts or fills the available disk. Separately, the default persistence mode writes to a single file without journaling — so any interrupted write, whether from a power cycle or a forced shutdown, produces a corrupted memory store that requires manual repair before the agent runs again.
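Both failure modes have well-known general mitigations: cap the store's size, and never overwrite the live file in place. The sketch below shows the generic technique (write to a temporary file, then rename it over the target). It is not an OpenClaw feature or configuration option, and the function names and the list-shaped memory store are assumptions made for illustration.

```python
import json
import os
import tempfile
from pathlib import Path


def prune(entries: list, max_entries: int) -> list:
    """Keep only the newest `max_entries` items (list is ordered oldest first)."""
    return entries[-max_entries:] if max_entries > 0 else []


def atomic_write_json(path: Path, data) -> None:
    """Write to a temp file in the same directory, then rename over the target.

    If the process dies mid-write, the previous file is still intact,
    because the target is only replaced after the new content is on disk.
    """
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as tmp:
            json.dump(data, tmp)
            tmp.flush()
            os.fsync(tmp.fileno())  # force the bytes to disk before renaming
        os.replace(tmp_name, path)  # rename is atomic on POSIX file systems
    except BaseException:
        # Clean up the partial temp file and re-raise the original error.
        if os.path.exists(tmp_name):
            os.unlink(tmp_name)
        raise
```

A periodic job that loads the store, calls `prune`, and writes it back with `atomic_write_json` would address both the unbounded growth and the interrupted-write corruption described above, under these assumptions.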

3. The Security Exposure On A Default Install

The Gateway binds to all network interfaces out of the box rather than localhost, making it reachable from outside the local machine on a default install. API keys are stored unencrypted in the config file. ClawHub skills run as third-party code with whatever system permissions they declare in their metadata, and there is no central vetting process before a skill is published to the registry.
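The network-exposure point comes down to which address the process listens on. The sketch below classifies a bind address as loopback-only or not, using Python's standard `ipaddress` module. How OpenClaw names or stores this setting is not covered here, so the function takes the host string directly.

```python
import ipaddress


def is_loopback_only(bind_host: str) -> bool:
    """True if a server bound to `bind_host` is reachable only from this machine."""
    if bind_host == "localhost":
        return True
    try:
        return ipaddress.ip_address(bind_host).is_loopback
    except ValueError:
        # Not an IP literal (for example an arbitrary hostname): treat as exposed.
        return False


if __name__ == "__main__":
    for host in ["127.0.0.1", "::1", "0.0.0.0", "::", "192.168.1.10"]:
        status = "local only" if is_loopback_only(host) else "reachable from the network"
        print(f"{host:>14}: {status}")
```

`0.0.0.0` and `::` mean "all interfaces", which is the default behavior the paragraph above warns about; `127.0.0.1` and `::1` restrict access to the local machine.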

Cisco's security research team tested a third-party OpenClaw skill and confirmed it performed data exfiltration and prompt injection without producing any user-facing indication that it had occurred.

4. The Ongoing Operational Load

A stable deployment requires sustained attention well beyond the initial setup. The project ships frequent releases, several of which have been direct responses to disclosed vulnerabilities — making version updates a security requirement, not routine maintenance. Each upgrade carries the risk of config drift and requires a validation pass to confirm the agent starts cleanly on the updated schema.

There is no built-in health monitoring or alerting. A downed Gateway is typically discovered only when a scheduled task fails silently or a user notices the agent has stopped responding. Each additional service connected to the agent adds a credential management responsibility that compounds as the agent's scope expands, with no centralized view of what the agent currently has access to across all connected platforms.
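In the absence of built-in monitoring, an external probe is a common stopgap. The sketch below only tests whether something accepts TCP connections on the Gateway's local dashboard address (127.0.0.1:18789, as given earlier); it assumes nothing about OpenClaw's HTTP endpoints, and `gateway_is_up` is a hypothetical helper. An open port shows the process is listening, not that the agent is healthy.

```python
import socket


def gateway_is_up(host: str = "127.0.0.1", port: int = 18789, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    if gateway_is_up():
        print("Gateway port is accepting connections")
    else:
        print("Gateway appears to be down")
```

Run from cron or a systemd timer, a check like this turns a silent outage into something that can trigger a notification.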

For developers, this operational load is expected and manageable. For the broader audience that finds OpenClaw most compelling — people looking for an AI assistant that handles their daily tasks — it is the reason most attempts stall before the agent sends its first message.

OpenClaw Without the Setup: How a Physical Device Changes Things

Everything described in the previous section — the Node.js requirements, the config files, the security hardening, the ongoing maintenance — assumes you are running OpenClaw yourself. A pre-configured device removes that assumption entirely.

The idea is direct: take the full OpenClaw stack — Gateway, model connections, messaging integrations — and ship it pre-installed on dedicated hardware. No terminal. No API key management. No server to provision or maintain. You plug it in, connect it to Wi-Fi, and text your agent through the messaging apps you already use.

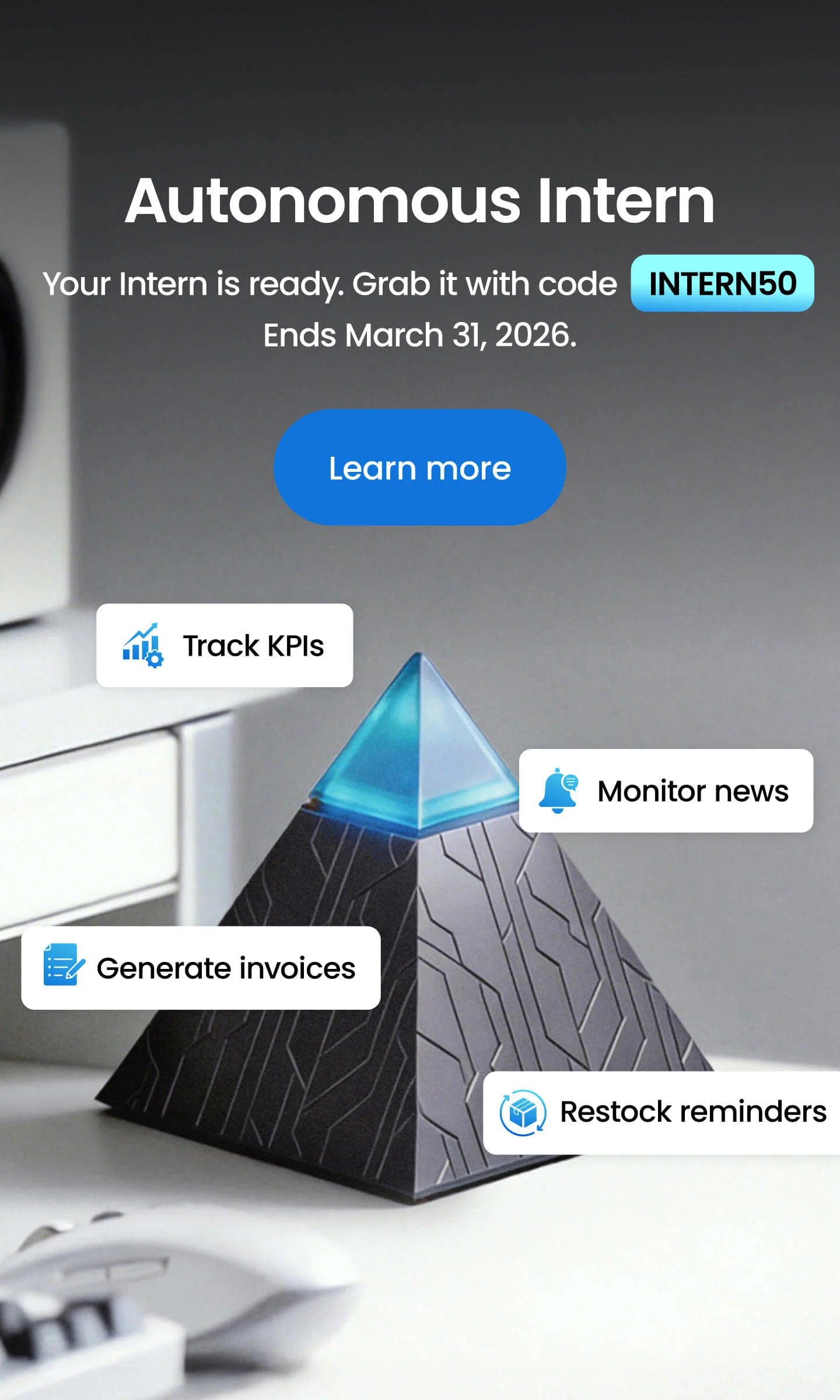

Autonomous Intern is built on exactly this premise — a personal AI assistant with the models and integrations pre-configured. It sits on your desk as a standalone unit, connects to your local network over Wi-Fi, and requires nothing installed on your computer or work machine to operate.

Data stays on the device. This is the architecture behind private AI assistants: conversations, session memory, and files are stored on local hardware and are not transmitted to external servers for processing or model training. Voice input works through connected chat platforms (Slack, iMessage, WhatsApp, and others) rather than through an onboard microphone, which means there is no ambient listening by design. Because the device requires no software installation on a host machine, it is compatible with SSO environments, managed devices, and corporate firewalls without IT approval.

The tradeoff against a self-hosted deployment is control versus operational overhead. A custom install allows deeper configuration — custom skills, specific model selection, network-level routing rules. A device like Intern abstracts that layer in exchange for a deployment path that requires no technical prerequisites. Both run the same OpenClaw engine. The difference is where the infrastructure responsibility sits.

Who Should Actually Use OpenClaw?

The right path into OpenClaw depends almost entirely on technical comfort and how much control you need over the underlying stack.

- Developers and Power Users

For anyone comfortable with a terminal and familiar with Node.js environments, the self-hosted route is the more capable option. Direct access to the Gateway configuration means full control over model selection, skill permissions, session routing, and network exposure.

Custom OpenClaw skills can be written and loaded locally without going through ClawHub, and the entire stack can be audited and modified at the code level.

- Solopreneurs and Small Business Owners

This is where OpenClaw's practical value is most immediate. A solopreneur running client communications, invoicing, research, and scheduling across multiple platforms is managing a workload that maps directly to what an autonomous agent handles well — recurring tasks, inbox management, calendar coordination, document drafting.

The agent's persistent memory means it builds context about ongoing work over time, reducing the instruction overhead on repeated tasks. For this profile, the device route removes the only real barrier to entry.

- Non-technical Professionals

Real estate agents, accountants, content creators, and operations staff in small teams represent a growing segment of OpenClaw users who need task execution, not infrastructure management.

The use cases are concrete: pulling property data and drafting listing emails, tracking expenses and generating reports, scheduling across time zones, monitoring inboxes for specific triggers. None of these require CLI access or custom configuration — they require a working agent with the right skills enabled, which a pre-configured device handles without additional setup.

- Who It Is Not For

OpenClaw is not a passive tool. It requires intentional setup: deciding what the agent can access and what it is authorized to act on, plus ongoing awareness of what it does on your behalf.

Users expecting a plug-and-play productivity shortcut without any configuration or oversight will find the reality more demanding. The agent's value scales directly with how deliberately it is configured — regardless of which deployment path is used.

FAQs

What is OpenClaw and what does it do?

OpenClaw is an open-source autonomous AI agent that can execute tasks, maintain memory across sessions, and connect to external tools. Unlike typical AI chat assistants, OpenClaw runs continuously and can act on your behalf without needing a new prompt each time. It is designed to automate workflows across messaging apps, files, APIs, and other services.

How does OpenClaw work?

OpenClaw runs through a background process called the Gateway, which continuously listens for messages from connected platforms like Telegram or Slack. When a message arrives, OpenClaw routes it to a configured language model, determines the required actions, executes them through skills, and returns the results in the same chat thread. This loop allows the agent to reason, act, and store memory over time.

Is OpenClaw free to use?

Yes. OpenClaw is released under the MIT license, so the software itself is free and open source. However, users may still pay for AI model APIs, hosting infrastructure, or hardware depending on how they run the system.

Is OpenClaw difficult to install?

For many users, yes. Installing OpenClaw typically requires setting up Node.js environments, configuring API keys, connecting messaging platforms, and maintaining the Gateway process. Because of this complexity, many users look for preconfigured deployments instead of installing it manually.

Can OpenClaw run locally without sending data to the cloud?

Yes. OpenClaw can run fully locally when paired with local model runtimes such as Ollama. In this setup, OpenClaw processes conversations, memory, and reasoning directly on your device, meaning data does not leave your hardware.

Is OpenClaw safe to run on your computer?

OpenClaw can be safe when properly configured, but it requires careful security management. The system can execute commands and run third-party skills, so users typically restrict permissions and review installed modules. Secure deployments often run the agent on a dedicated machine rather than a primary workstation.

What is the easiest way to start using OpenClaw?

The easiest way to start using OpenClaw is with a preconfigured system where the OpenClaw software, models, and messaging integrations are already installed. This avoids the need to configure Node.js environments, API keys, and messaging credentials manually. Devices that run the OpenClaw stack out of the box allow users to start interacting with their AI agent immediately.

Do you need coding skills to use OpenClaw?

Yes, if you plan to install and manage OpenClaw yourself. The setup process usually involves command-line installation, configuration files, and managing API credentials for models and messaging platforms. For users without coding experience, a preconfigured OpenClaw device removes most of that complexity.

What is the best hardware for running OpenClaw?

Many users run OpenClaw on small dedicated systems such as a Mac Mini, mini PC, or home server. These machines can keep the Gateway process running continuously without interrupting a primary workstation. Dedicated hardware also improves reliability for scheduled tasks and long-running agent sessions.

Should you run OpenClaw yourself or use a dedicated OpenClaw device?

Running OpenClaw yourself gives full control over the system, including model selection, custom skills, and infrastructure setup. However, it also requires managing installations, updates, security, and ongoing maintenance. A dedicated OpenClaw device runs the same engine on preconfigured hardware, allowing users to access their AI agent through messaging apps without maintaining the underlying infrastructure.

Conclusion

OpenClaw represents a genuine shift in how AI is being used — not as a tool you query, but as an agent that works on your behalf, continuously, across the platforms you already use. The capability is real. So is the gap between what it can do and what most people can realistically set up and maintain on their own.

For developers and technically proficient users, the self-hosted OpenClaw install offers full control over the stack — model selection, skill configuration, network rules, and everything in between. For everyone else, the setup complexity and security overhead remain the primary barrier, which is exactly the problem a pre-configured device like Autonomous Intern is built to solve.

What the OpenClaw AI agent makes clear, regardless of how you run it, is that the shift toward persistent, autonomous agents is already underway. The question isn't whether this category of software matters — it's which deployment path fits your technical comfort level, your use case, and how much of the infrastructure you want to own.
